AI Laws and Regulation in the United States (2026)

Artificial intelligence regulation in the United States is evolving at an unprecedented pace. While Congress has not passed a comprehensive federal AI law, a patchwork of executive orders, targeted federal statutes, and aggressive state-level legislation is shaping how AI systems can be developed, deployed, and used across the country.

This guide covers the current state of AI regulation at every level of government, from federal executive orders and the NIST framework to state-specific laws governing everything from deepfakes to automated hiring decisions.

Federal AI Regulation

The federal approach to AI regulation has swung dramatically between administrations, leaving businesses and developers navigating an uncertain landscape.

Biden's AI Executive Order (2023)

On October 30, 2023, President Biden signed Executive Order 14110, titled "Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence." The order directed over 50 federal entities to take more than 100 specific actions across eight policy areas, including AI safety, innovation, worker protections, equity, and privacy.

Key provisions included requiring companies training AI models above certain computational thresholds to report to the federal government under Defense Production Act authority. The order directed NIST to develop safety guidelines and red-teaming standards, and required federal agencies to designate Chief AI Officers.

EO 14110 represented the most sweeping federal action on AI to date, but it remained an executive order rather than legislation, making it vulnerable to reversal by a future president.

Trump Revokes and Replaces (2025)

President Trump rescinded Biden's EO 14110 on January 20, 2025, his first day in office. On January 23, 2025, he signed Executive Order 14179, "Removing Barriers to American Leadership in Artificial Intelligence." The new order declared U.S. policy to "sustain and enhance America's global AI dominance" and shifted the federal stance from safety oversight to deregulation and innovation.

Trump followed with EO 14365 on December 11, 2025, titled "Ensuring a National Policy Framework for Artificial Intelligence." This order directly targets state AI laws by:

- Establishing a DOJ AI Litigation Task Force to challenge state AI laws on constitutional and preemption grounds

- Directing the Secretary of Commerce to identify state laws deemed "problematic" within 90 days

- Threatening to withhold federal broadband funding (BEAD Program) from states with conflicting AI rules

- Directing the FTC and FCC to consider federal standards that could preempt state-level regulations

Legal scholars note that an executive order cannot override state law without congressional action or a court ruling. States retain their regulatory authority unless and until Congress acts or courts strike down specific laws.

TAKE IT DOWN Act (P.L. 119-12)

The TAKE IT DOWN Act, signed on May 19, 2025, is the first federal law directly regulating AI-generated content. The law criminalizes the nonconsensual publication of intimate images, including AI-generated deepfakes.

Criminal penalties under the Act:

| Offense | Adults | Minors |

|---|---|---|

| Publishing intimate images/deepfakes | Up to 2 years | Up to 3 years |

| Threatening to publish | Up to 18 months | Up to 30 months |

Covered platforms must establish notice-and-takedown processes and remove reported content within 48 hours. The platform compliance deadline is May 19, 2026 (one year from enactment). The FTC enforces violations as unfair or deceptive acts under the FTC Act.
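The two timing rules above reduce to simple date arithmetic. The following is a minimal Python sketch, assuming the 48-hour window starts when the platform receives a removal request; the function and constant names are illustrative and do not come from the statute.

```python
from datetime import date, datetime, timedelta, timezone

# Figures taken from the text above; all names here are hypothetical.
ENACTMENT_DATE = date(2025, 5, 19)
REMOVAL_WINDOW = timedelta(hours=48)


def platform_compliance_date(enacted: date) -> date:
    """Return the date one year after enactment (same month and day)."""
    return enacted.replace(year=enacted.year + 1)


def removal_deadline(request_received: datetime) -> datetime:
    """Latest removal time, assuming the clock starts when the request arrives."""
    return request_received + REMOVAL_WINDOW


received = datetime(2026, 6, 1, 9, 30, tzinfo=timezone.utc)
print(platform_compliance_date(ENACTMENT_DATE))  # 2026-05-19
print(removal_deadline(received).isoformat())    # 2026-06-03T09:30:00+00:00
```

Using timezone-aware timestamps keeps the 48-hour calculation unambiguous when reports arrive from users in different time zones.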

One Big Beautiful Bill Act (July 2025)

The One Big Beautiful Bill Act, signed July 4, 2025, originally contained a 10-year moratorium on state and local AI regulation. The Senate stripped this provision in a 99-1 vote on July 1, 2025, preserving states' authority to regulate AI.

AI provisions that survived in the final law focus on defense and military applications. These include $450 million for naval shipbuilding AI and autonomous robotics, $250 million for AI ecosystem advancement through the Department of Defense, $250 million for Cyber Command AI expansion, and $200 million for DOD financial audit automation using AI.

NIST AI Risk Management Framework

The National Institute of Standards and Technology has published the most influential federal AI guidance through its voluntary framework:

| Document | Published | Status |

|---|---|---|

| AI RMF 1.0 | January 26, 2023 | Final |

| AI 600-1 (Generative AI Profile) | July 26, 2024 | Final |

| IR 8596 (Cyber AI Profile) | December 16, 2025 | Draft |

The AI RMF 1.0 provides a voluntary framework for managing AI risks. Several state laws, including Colorado's AI Act, offer safe harbor provisions for organizations that substantially comply with the NIST framework.

State AI Laws: A 50-State Patchwork

State legislatures have moved aggressively to regulate AI where Congress has not. In 2025, all 50 states plus Washington D.C., Puerto Rico, and the U.S. Virgin Islands introduced AI legislation. Thirty-eight states enacted roughly 100 AI-related measures that year.

Colorado: First Comprehensive State AI Law

Colorado SB 24-205, the Consumer Protections for Artificial Intelligence Act, was signed on May 17, 2024. It was the first state law to broadly regulate AI systems that make "consequential decisions" affecting consumers.

The law defines high-risk AI as any system that makes or substantially factors into decisions about employment, education, financial services, healthcare, housing, insurance, or legal services.

Key requirements include:

- Developers must use "reasonable care" to prevent algorithmic discrimination

- Deployers must implement risk management policies and conduct annual impact assessments

- Consumers must be notified when AI makes consequential decisions about them

- Discovered algorithmic discrimination must be disclosed to the Attorney General within 90 days

Enforcement begins June 30, 2026, with violations treated as deceptive trade practices under the Colorado Consumer Protection Act. Organizations that comply with the NIST AI RMF have an affirmative defense.

California: Multiple AI Laws Take Effect

California has enacted several targeted AI statutes rather than a single comprehensive law:

SB 53 (Frontier AI Safety): Signed September 29, 2025. Requires large frontier AI developers to publish safety frameworks, report critical safety incidents to the Office of Emergency Services within 15 days, and protect whistleblowers who disclose catastrophic risks. Civil penalties up to $1 million per violation.

SB 942 (AI Transparency Act): Effective January 1, 2026. Providers with over 1 million monthly California users must offer free AI detection tools and embed machine-readable disclosure metadata in AI-generated content. Penalties of $5,000 per violation per day.

AB 2013 (Training Data Disclosure): Effective January 1, 2026. Developers must publicly document training datasets, including data types, copyright status, and whether personal information was used.

FEHA AI Employment Rules: Effective October 1, 2025. The California Civil Rights Department requires employers to audit AI tools used in hiring and to preserve records related to automated decision systems (ADS) for four years. Employers remain liable for discriminatory AI used by vendors or third parties.

Texas: TRAIGA

Texas HB 149, the Texas Responsible AI Governance Act, took effect January 1, 2026. The law prohibits AI systems designed to manipulate human behavior, discriminate against protected classes, produce child exploitation material, or infringe constitutional rights.

Penalties range from $10,000 to $12,000 per curable violation and $80,000 to $200,000 per uncurable violation, with daily penalties of $2,000 to $40,000 for continuing violations. The Texas Attorney General has exclusive enforcement authority.
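To see how these ranges combine, the sketch below totals a low and high estimate for a hypothetical mix of violations. It assumes the three penalty types simply add together, which is a simplification for illustration; actual amounts would be set case by case. The `Range` class and `exposure` function are names invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Range:
    """A low/high dollar range."""
    low: int
    high: int

    def times(self, n: int) -> "Range":
        return Range(self.low * n, self.high * n)

    def plus(self, other: "Range") -> "Range":
        return Range(self.low + other.low, self.high + other.high)


# Dollar figures from the paragraph above.
CURABLE = Range(10_000, 12_000)
UNCURABLE = Range(80_000, 200_000)
DAILY_CONTINUING = Range(2_000, 40_000)


def exposure(curable: int, uncurable: int, continuing_days: int) -> Range:
    """Sum the ranges, assuming (for illustration) that penalties are additive."""
    return (
        CURABLE.times(curable)
        .plus(UNCURABLE.times(uncurable))
        .plus(DAILY_CONTINUING.times(continuing_days))
    )


example = exposure(curable=2, uncurable=1, continuing_days=10)
print(example)  # Range(low=120000, high=624000)
```

The wide gap between the low and high totals comes mostly from the daily penalty, whose upper bound is 20 times its lower bound.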

The law also creates the Texas AI Council (7 members appointed by the governor) and a regulatory sandbox allowing 36-month testing periods. Organizations that substantially comply with the NIST AI RMF receive safe harbor protections.

Illinois: AI and Employment Discrimination

Illinois HB 3773 (Public Act 103-0804), effective January 1, 2026, amends the Illinois Human Rights Act to make it a civil rights violation to use AI that discriminates based on protected classes in employment decisions.

The law specifically prohibits using zip codes as a proxy for race in predictive analytics. Employers must notify employees and applicants when AI is used for employment decisions, including recruitment, hiring, promotion, and discharge.

New York City: Automated Hiring Tool Audits

NYC Local Law 144, enforced since July 5, 2023, requires employers using automated employment decision tools (AEDTs) to obtain independent bias audits annually, testing for disparate impact based on sex, ethnicity, and race.

Employers must notify candidates at least 10 business days before using an AEDT and disclose which job qualifications the tool assesses. Violations carry penalties of $500 for first offenses and $500 to $1,500 for subsequent violations, with each day of non-compliant use counted as a separate violation.
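Because each day of non-compliant use counts as a separate violation, exposure grows with the number of days. A rough Python sketch follows, assuming the first day is charged at the first-offense amount and every later day falls in the subsequent-violation range; the function name is hypothetical.

```python
# Dollar figures from the paragraph above.
FIRST_VIOLATION = 500
SUBSEQUENT_MIN = 500
SUBSEQUENT_MAX = 1_500


def aedt_penalty_range(days_of_use: int) -> tuple[int, int]:
    """Return (minimum, maximum) penalties for a run of non-compliant days."""
    if days_of_use < 0:
        raise ValueError("days_of_use must be non-negative")
    if days_of_use == 0:
        return (0, 0)
    subsequent_days = days_of_use - 1
    low = FIRST_VIOLATION + SUBSEQUENT_MIN * subsequent_days
    high = FIRST_VIOLATION + SUBSEQUENT_MAX * subsequent_days
    return (low, high)


print(aedt_penalty_range(30))  # (15000, 44000)
```

This covers a single type of violation for a single tool; an employer that also skipped candidate notices could face additional, separately counted violations.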

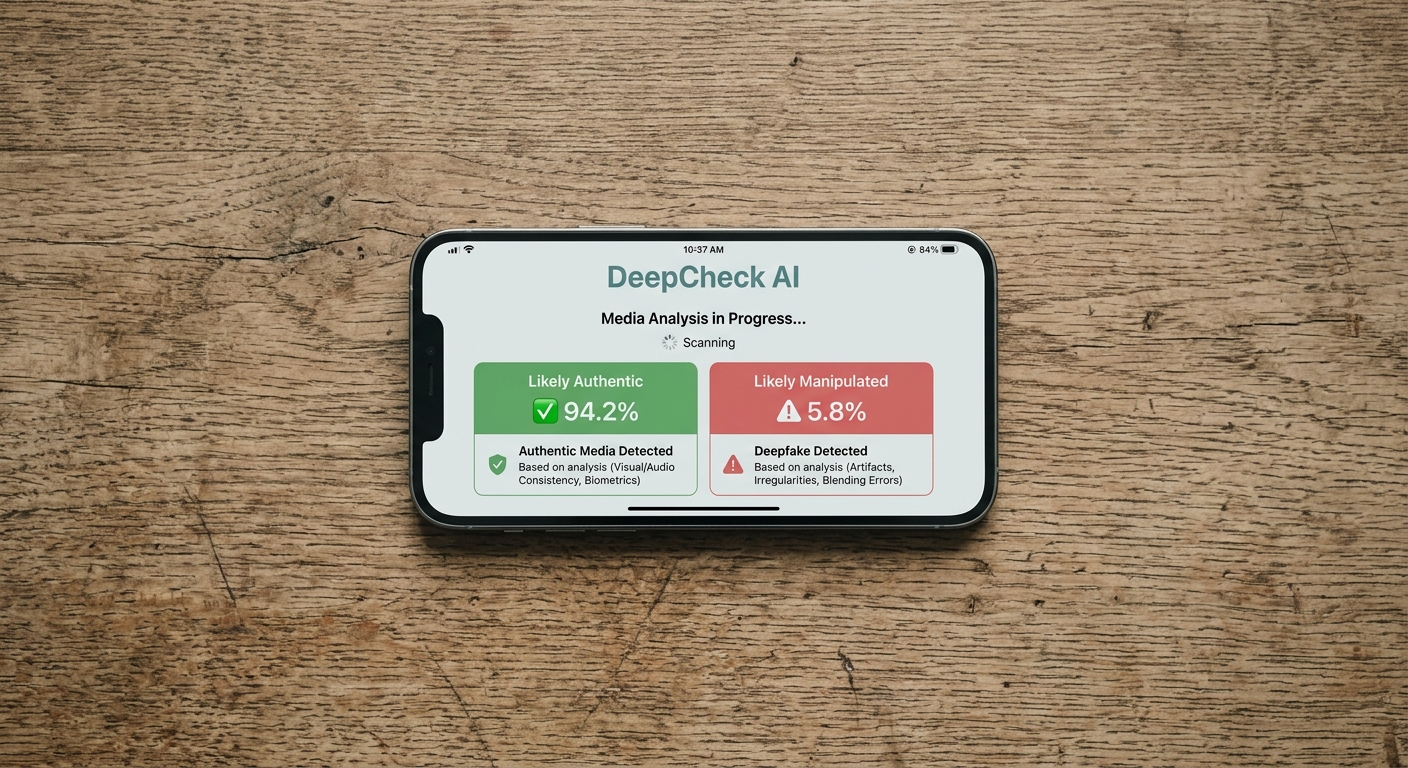

Deepfake Laws Across the States

Deepfake regulation has expanded faster than any other AI policy area. As of mid-2025, 47 states have enacted deepfake legislation. Only Alaska, Missouri, and Ohio lack deepfake laws. In 2025 alone, states enacted 64 new deepfake-related laws, a 23% increase over 2024.
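The figures in this paragraph can be cross-checked with a few lines of arithmetic. The sketch below derives the implied 2024 count from the 23% growth figure; the result is an estimate inferred from the numbers above, not a reported statistic.

```python
# Figures from the paragraph above.
LAWS_2025 = 64
GROWTH_OVER_2024 = 0.23
STATES_WITHOUT_LAWS = ["Alaska", "Missouri", "Ohio"]

# A 23% increase means laws_2025 = laws_2024 * 1.23, so solve for laws_2024.
implied_2024 = LAWS_2025 / (1 + GROWTH_OVER_2024)
states_with_laws = 50 - len(STATES_WITHOUT_LAWS)

print(round(implied_2024))  # 52
print(states_with_laws)     # 47
```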

State deepfake laws generally fall into two categories:

Sexually explicit deepfakes: 46 states address nonconsensual intimate deepfakes, up from 32 at the start of 2025. California leads with 18 deepfake-related statutes, followed by Texas (10), New York (8), and Utah (8).

Election deepfakes: 28 states regulate AI-generated content in political communications. The issue gained urgency in March 2026 when the National Republican Senatorial Committee released a fully AI-generated video of a Texas Senate candidate, intensifying the national debate over AI in elections.

AI in Employment

AI-powered hiring tools, resume screeners, and employee monitoring systems are subject to growing regulation. Multiple jurisdictions now require employers to audit, disclose, or limit how AI factors into employment decisions.

| Jurisdiction | Law | Effective | Key Requirement |

|---|---|---|---|

| New York City | Local Law 144 | Jul 2023 | Annual independent bias audit |

| California | FEHA AI Rules | Oct 2025 | Bias testing, 4-year record retention |

| Illinois | HB 3773 | Jan 2026 | Civil rights violation for discriminatory AI |

| Colorado | SB 24-205 | Jun 2026 | Impact assessments, algorithmic discrimination prevention |

| Texas | HB 149 | Jan 2026 | Prohibits intentional AI discrimination |

California's rules are the most detailed, banning discriminatory automated decision systems in employment and requiring proactive bias testing, human oversight, and four-year record retention of AI audit data. Illinois takes a broader approach by treating AI discrimination as a standard civil rights violation under the Human Rights Act.
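For an employer operating in several of these jurisdictions, the table can be treated as a small lookup structure. The sketch below is one possible way to encode it in Python and ask which rules are in effect on a given date. The effective dates are the ones stated in this guide, and the class and function names are invented for the example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class EmploymentRule:
    jurisdiction: str
    law: str
    effective: date
    requirement: str


# Rows mirror the table above, using the exact dates given elsewhere in this guide.
RULES = [
    EmploymentRule("New York City", "Local Law 144", date(2023, 7, 5),
                   "Annual independent bias audit"),
    EmploymentRule("California", "FEHA AI Rules", date(2025, 10, 1),
                   "Bias testing, 4-year record retention"),
    EmploymentRule("Illinois", "HB 3773", date(2026, 1, 1),
                   "Civil rights violation for discriminatory AI"),
    EmploymentRule("Texas", "HB 149", date(2026, 1, 1),
                   "Prohibits intentional AI discrimination"),
    EmploymentRule("Colorado", "SB 24-205", date(2026, 6, 30),
                   "Impact assessments, algorithmic discrimination prevention"),
]


def rules_in_effect(on: date, jurisdictions: set[str]) -> list[EmploymentRule]:
    """Return rules for the given jurisdictions that are effective on `on`."""
    return [r for r in RULES
            if r.jurisdiction in jurisdictions and r.effective <= on]


active = rules_in_effect(date(2026, 3, 1), {"Colorado", "Illinois", "New York City"})
print([r.law for r in active])  # ['Local Law 144', 'HB 3773']
```

In this example Colorado is excluded because its enforcement date falls after the query date of March 1, 2026.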

Healthcare AI Regulation

In 2025, 47 states introduced healthcare AI bills, making it the most legislatively active healthcare topic at the state level.

Texas leads with SB 1188 (effective September 1, 2025), requiring healthcare practitioners to disclose AI use in diagnosis and treatment and imposing civil penalties of $5,000 to $250,000 per violation.

California's AB 3030 (effective January 1, 2025) requires health facilities to notify patients when generative AI communicates clinical information, with specific formatting rules for written, chat, audio, and video communications. AB 489 (effective January 2026) prohibits AI systems from implying users are receiving care from licensed providers unless that oversight actually exists.

At the federal level, the FDA has authorized 1,451 cumulative AI/ML-enabled medical devices through 2025, with 2025 setting a record of 295 device clearances. Radiology accounts for 76% of all authorized AI medical devices.
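To put those FDA figures in proportion, the short calculation below derives the 2025 share of the cumulative total and the approximate number of radiology devices. It assumes the 295 clearances from 2025 are included in the 1,451 cumulative figure, which the text implies but does not state outright.

```python
# Figures from the paragraph above.
CUMULATIVE_DEVICES = 1451
CLEARED_IN_2025 = 295
RADIOLOGY_SHARE = 0.76

share_2025 = CLEARED_IN_2025 / CUMULATIVE_DEVICES
radiology_devices = round(CUMULATIVE_DEVICES * RADIOLOGY_SHARE)
devices_before_2025 = CUMULATIVE_DEVICES - CLEARED_IN_2025

print(f"{share_2025:.1%}")   # 20.3%
print(radiology_devices)     # 1103
print(devices_before_2025)   # 1156
```

On these numbers, roughly one in five of all authorized AI/ML-enabled devices was cleared in 2025 alone.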

Federal vs. State: The Preemption Battle

The dominant AI policy story of 2025-2026 is the tension between federal deregulation and state regulation.

The Trump administration's December 2025 executive order (EO 14365) directly targets state AI laws, with the Colorado AI Act called out by name. The order creates mechanisms to challenge state laws through litigation, conditional funding, and potential federal standards that could override state rules.

However, executive orders cannot repeal state statutes. That requires either an act of Congress or a court ruling. The Senate's 99-1 vote in July 2025 to strip the AI moratorium from the One Big Beautiful Bill Act demonstrates bipartisan resistance to federal preemption of state AI authority.

States continue to legislate aggressively despite the federal pressure. The result is a regulatory patchwork that creates compliance challenges for companies operating across state lines, but also ensures that AI oversight continues even without federal legislation.

AI in Government and the DOGE Controversy

The Department of Government Efficiency (DOGE) has deployed AI tools to review an estimated 200,000 federal regulations, claiming to recommend cuts to roughly 100,000 of them. The initiative claims a 93% reduction in labor hours for regulatory review.

Federal agencies including the GSA have deployed generative AI chatbots using models from Anthropic and Meta. However, House Democrats have raised concerns about unauthorized AI use, data security breaches, and the potential for AI systems to misinterpret statutes when making deregulatory recommendations.

The debate over AI in government operations highlights a broader tension: while AI can increase efficiency and reduce costs, deploying it for consequential government decisions raises questions about accountability, transparency, and due process.

What to Watch in 2026

Several developments will shape AI regulation through the rest of 2026:

Colorado AI Act enforcement begins June 30, 2026, providing the first test of a comprehensive state AI law in practice.

TAKE IT DOWN Act platform compliance takes effect May 19, 2026, requiring covered platforms to have notice-and-takedown processes in place for nonconsensual intimate images, including deepfakes.

EU AI Act high-risk requirements take effect August 2, 2026, potentially influencing U.S. companies with global operations.

The 2026 midterm elections will test state deepfake laws as AI-generated campaign content becomes increasingly common.

Federal AI legislation may advance as the Trump administration issues legislative recommendations for a federal framework, though bipartisan consensus on the scope of federal AI law remains elusive.

Sources and References

- Executive Order 14110 on Safe, Secure, and Trustworthy AI (nist.gov)

- Executive Order 14179: Removing Barriers to American Leadership in AI (whitehouse.gov)

- EO 14365: Ensuring a National Policy Framework for AI (federalregister.gov)

- TAKE IT DOWN Act (S.146) (congress.gov)

- NCSL: Artificial Intelligence 2025 Legislation (ncsl.org)

- Colorado SB 24-205: Consumer Protections for AI (leg.colorado.gov)

- California SB 53: Frontier AI Safety (leginfo.legislature.ca.gov)

- California SB 942: AI Transparency Act (leginfo.legislature.ca.gov)

- California AB 2013: Training Data Disclosure (leginfo.legislature.ca.gov)

- Texas HB 149: Responsible AI Governance Act (capitol.texas.gov)

- NYC Automated Employment Decision Tools (nyc.gov)

- NIST AI Risk Management Framework (nist.gov)

- California AB 3030: Healthcare AI Disclosure (leginfo.legislature.ca.gov)

- FDA AI/ML Software as Medical Device (fda.gov)

- State Deepfake Legislation Tracker (ballotpedia.org)

- 47 States Introduced Healthcare AI Bills in 2025 (beckershospitalreview.com)