Texas AI Laws and Regulation (2026)

Overview of Texas AI Laws

Texas has established itself as a major force in state-level artificial intelligence regulation. During the 89th Legislative Session in 2025, the Texas Legislature passed a comprehensive suite of AI laws that address everything from broad AI governance to healthcare-specific requirements, deepfake protections, and government AI oversight.

The centerpiece of Texas's AI regulatory framework is the Texas Responsible Artificial Intelligence Governance Act (TRAIGA), signed by Governor Greg Abbott on June 22, 2025. TRAIGA makes Texas the second state, after Colorado, to enact comprehensive AI legislation. Texas took a distinctly different approach from Colorado, however, basing liability on intent rather than on discriminatory outcomes.

Texas's approach reflects the state's traditionally business-friendly regulatory philosophy. TRAIGA provides multiple safe harbor provisions, creates a regulatory sandbox for innovation, and vests enforcement authority exclusively in the Attorney General rather than creating private rights of action. At the same time, the state has enacted aggressive protections against deepfakes, imposed healthcare AI disclosure requirements, and established government AI oversight mechanisms.

This article covers all enacted and pending Texas AI legislation, including TRAIGA, healthcare AI laws, deepfake protections, and government AI regulations. This information is current as of March 2026, but you should consult a licensed Texas attorney for advice specific to your situation.

Texas Responsible AI Governance Act (TRAIGA): HB 149

The Texas Responsible Artificial Intelligence Governance Act, enacted as HB 149, is the most significant AI law passed by the Texas Legislature. Governor Abbott signed the bill on June 22, 2025, and it took effect on January 1, 2026.

Scope and Definitions

TRAIGA defines an "artificial intelligence system" as any machine-based system that, for any explicit or implicit objective, infers from its inputs how to generate outputs including content, decisions, predictions, or recommendations that can influence physical or virtual environments. This broad definition captures most modern AI tools, from large language models to automated decision-making systems.

The law applies to any entity that develops or deploys an AI system in Texas, advertises or promotes products or services in the state, conducts business in Texas, or offers products or services used by Texas residents.

Prohibited AI Practices

TRAIGA prohibits several categories of AI use. Under the law, it is illegal to develop or deploy AI systems that:

- Are intentionally aimed at inciting or encouraging self-harm or criminal activity

- Produce child sexual abuse material (CSAM) or deepfake pornography

- Engage in text-based conversations that simulate or describe sexual content while impersonating a child

- Use "social scoring" by government entities to categorize individuals for detrimental treatment

- Intentionally discriminate against protected classes under federal or state law

- Use biometric data from publicly available sources to uniquely identify persons without consent

Intent-Based Liability Framework

TRAIGA's most significant distinction from other state AI laws is its intent-based liability framework. Unlike Colorado's AI Act, which creates liability based on discriminatory outcomes (disparate impact), Texas requires proof of intentional misconduct. A company that inadvertently produces biased results through its AI system would not automatically violate TRAIGA, as long as there was no intent to discriminate.

This approach gives businesses clearer compliance guidelines. As long as a company acts in good faith and does not deliberately design or use AI systems to cause harm, it faces lower legal risk under TRAIGA than under impact-focused regulations.

Enforcement and Penalties

TRAIGA does not create a private cause of action. Only the Texas Attorney General can enforce the law, and civil penalties range from $10,000 to $200,000 per violation. Violations can accrue on a continuing daily basis, meaning ongoing noncompliance can result in rapidly escalating penalties.

Before initiating enforcement action, the Attorney General must provide written notice to the alleged violator, giving the company 60 days to cure the violation and implement policy changes necessary to prevent further violations. This cure period provides companies with an opportunity to correct issues before facing penalties.

| Enforcement Detail | Requirement |
|---|---|
| Enforcement authority | Texas Attorney General (exclusive) |
| Civil penalty range | $10,000 to $200,000 per violation |
| Penalty accrual | Can accrue daily for continuing violations |
| Notice requirement | Written notice before action |
| Cure period | 60 days to fix and prevent recurrence |
| Private right of action | None |
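
The cure period and penalty range above reduce to simple date and range arithmetic. The following is a minimal sketch for internal compliance tracking, not a statement of how the statute counts days or violations. The function names are hypothetical, and two points are assumptions: that the 60 days are calendar days counted from the notice date, and that the caller supplies the number of violations (how continuing days map to separate violations is not specified here).

```python
from datetime import date, timedelta

# Figures from the enforcement summary above; all names are hypothetical.
CURE_PERIOD_DAYS = 60
MIN_PENALTY_USD = 10_000
MAX_PENALTY_USD = 200_000


def cure_deadline(notice_date: date) -> date:
    """Return the date 60 calendar days after the notice date (assumed counting rule)."""
    return notice_date + timedelta(days=CURE_PERIOD_DAYS)


def penalty_exposure(violations: int) -> tuple[int, int]:
    """Return the (low, high) civil penalty range for a given number of violations."""
    if violations < 0:
        raise ValueError("violations must be non-negative")
    return violations * MIN_PENALTY_USD, violations * MAX_PENALTY_USD


notice = date(2026, 3, 2)
print(cure_deadline(notice))   # 2026-05-01
print(penalty_exposure(3))     # (30000, 600000)
```

Keeping the deadline and exposure calculations separate makes it easy to swap in a different counting rule if counsel advises one.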

Texas AI Council

TRAIGA creates the Texas Artificial Intelligence Council, a seven-member advisory body tasked with studying AI-related issues, making policy recommendations, and overseeing the regulatory sandbox program. The Council may issue non-binding reports and guidance but does not have rulemaking authority.

The Council's responsibilities include monitoring AI technology developments, advising state agencies on AI policy, evaluating the effectiveness of TRAIGA's provisions, and recommending legislative updates as the technology evolves. The advisory nature of the Council reflects Texas's preference for limited government intervention in technology markets.

Regulatory Sandbox Program

One of TRAIGA's most innovative features is the regulatory sandbox program, administered by the Texas Department of Information Resources (DIR) in consultation with the AI Council.

How the Sandbox Works

The sandbox allows approved participants to develop and test AI systems in a controlled environment, temporarily exempt from certain state licensing and regulatory requirements, for up to 36 months. This creates a structured pathway for businesses to experiment with novel AI applications without the immediate risk of regulatory penalties.

Key features of the sandbox include:

- Duration: Up to 36 months of regulatory flexibility

- Oversight: Administered by DIR with AI Council consultation

- Protections retained: Prohibitions on manipulation, discrimination, and unlawful content remain in force even within the sandbox

- Innovation focus: Designed to let companies test AI applications that might otherwise be impractical under existing regulatory frameworks

Limitations

The sandbox does not exempt participants from TRAIGA's core prohibitions. Companies operating in the sandbox must still comply with bans on discriminatory AI, CSAM generation, and other prohibited uses. The sandbox applies only to certain licensing and regulatory requirements that might otherwise prevent testing of innovative AI applications.

NIST Safe Harbor Provisions

TRAIGA provides multiple safe harbor provisions that incentivize companies to adopt responsible AI practices. A company is not liable under TRAIGA if it meets any of the following conditions:

- Third-party misuse: A third party misuses the AI system in a manner that TRAIGA prohibits, and the developer or deployer did not facilitate that misuse

- Internal discovery: The company discovers a violation through its own testing, including adversarial testing and red team exercises, and takes corrective action

- Framework compliance: The company substantially complies with the NIST AI Risk Management Framework or other recognized standards

The NIST safe harbor is particularly significant because it provides a concrete, actionable compliance standard. Companies that document their adherence to the NIST framework, conduct adversarial testing, and maintain audit trails can build strong defenses against enforcement actions.

What NIST Compliance Requires

To qualify for the NIST safe harbor, companies should implement processes that align with the NIST AI Risk Management Framework (AI RMF 1.0), including:

- Regular risk assessments of AI systems

- Documentation of AI system design decisions and known limitations

- Ongoing monitoring for bias, accuracy, and safety

- Stakeholder engagement and transparency reporting

- Incident response procedures for AI failures

Healthcare AI: SB 1188

Senate Bill 1188, signed by Governor Abbott on June 20, 2025, and effective September 1, 2025, introduces specific requirements for healthcare providers using AI in diagnostic contexts.

Disclosure Requirements

Healthcare practitioners who use AI for diagnostic purposes, including AI-powered recommendations for diagnosis or treatment based on patient medical records, must disclose their use of AI to patients. The disclosure can be verbal or written but must clearly inform patients that AI is being used for care-related purposes.

Conditions for AI Use in Healthcare

Healthcare practitioners may use AI for diagnostic purposes only if they meet all of the following conditions:

- The practitioner discloses their use of AI to patients

- The practitioner uses AI within the scope of their license, certification, or authorization

- The use of AI is not otherwise restricted or prohibited by applicable state or federal law

- The practitioner reviews all records created with AI in a manner consistent with medical records standards developed by the Texas Medical Board
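
Because all four conditions must hold at once, a practice could track them as a simple checklist. The sketch below is one possible way to do that; the class, field, and function names are hypothetical and are not drawn from SB 1188 or any Texas Medical Board system.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class DiagnosticAIUse:
    """Hypothetical checklist mirroring the four conditions listed above."""
    disclosed_to_patient: bool
    within_license_scope: bool
    not_otherwise_prohibited: bool
    records_reviewed_per_tmb: bool


def unmet_conditions(use: DiagnosticAIUse) -> list[str]:
    """Return the names of the conditions that are not satisfied."""
    return [f.name for f in fields(use) if not getattr(use, f.name)]


def may_use_ai(use: DiagnosticAIUse) -> bool:
    """All four conditions must hold; any unmet condition blocks use."""
    return not unmet_conditions(use)


visit = DiagnosticAIUse(
    disclosed_to_patient=True,
    within_license_scope=True,
    not_otherwise_prohibited=True,
    records_reviewed_per_tmb=False,
)
print(may_use_ai(visit))        # False
print(unmet_conditions(visit))  # ['records_reviewed_per_tmb']
```

Reporting which condition failed, rather than a bare yes or no, gives staff something concrete to fix before the AI-assisted record is finalized.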

Electronic Health Record Protections

SB 1188 also prohibits the physical offshoring of electronic medical records, requiring that patient health data remain within the United States. This provision addresses growing concerns about AI companies processing medical data through overseas servers.

Penalties

The Texas Attorney General is authorized to seek injunctive relief and civil penalties ranging from $5,000 to $250,000 per violation, depending on the nature and severity of the violation.

| Healthcare AI Requirement | Detail |
|---|---|
| Patient disclosure | Required before AI-assisted diagnosis |
| Scope limitation | Must be within practitioner's license |
| Record review | AI-created records must be reviewed per TMB standards |
| Data localization | EHRs cannot be offshored |
| Penalty range | $5,000 to $250,000 per violation |

Deepfake Laws

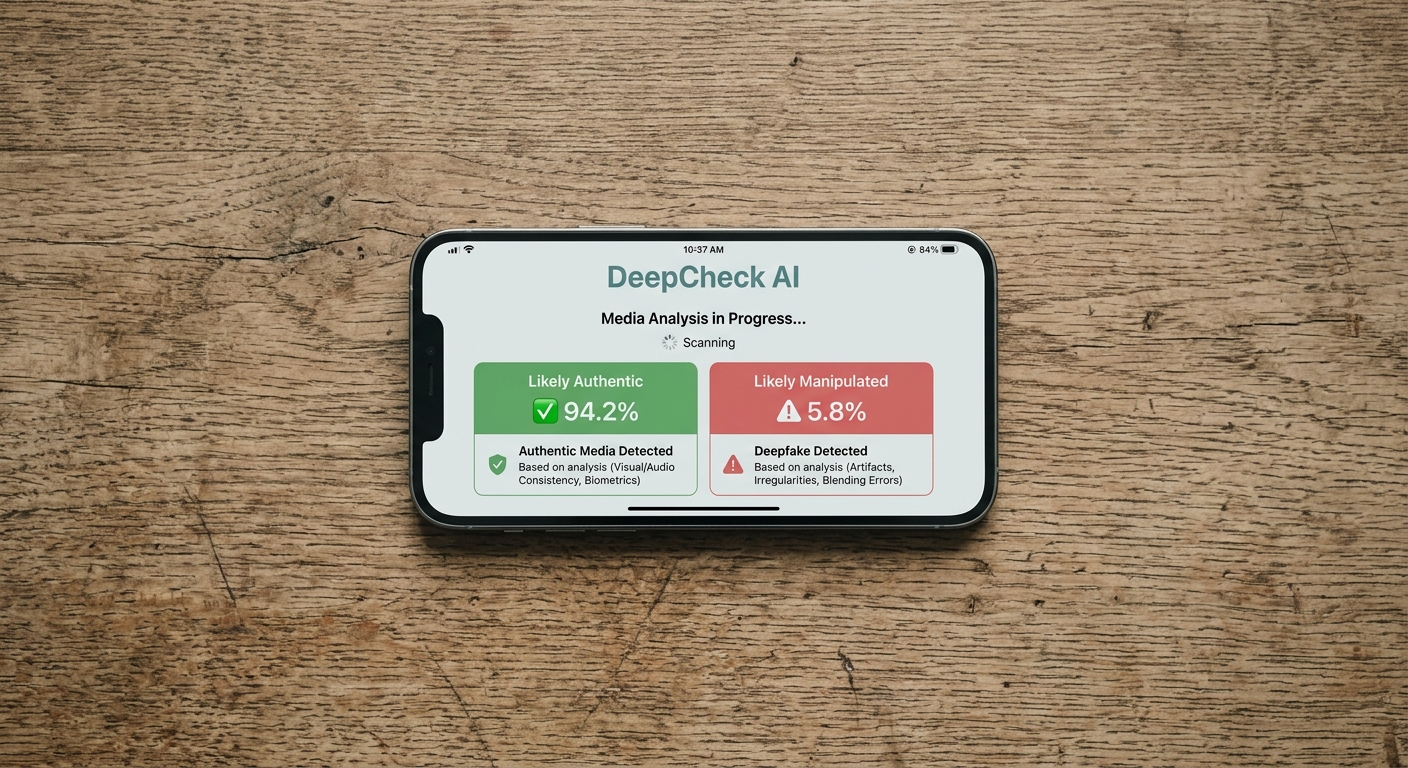

Texas has been a pioneer in deepfake legislation, and the 89th Legislative Session significantly expanded the state's deepfake protections.

Sexually Explicit Deepfakes: Penal Code Section 21.165

Under Texas Penal Code Section 21.165, it is illegal to knowingly produce or distribute deepfake media depicting a person with visible intimate parts or engaging in sexual conduct without their effective consent.

| Offense | Classification | Maximum Penalty |
|---|---|---|
| Production or distribution without consent | Class A misdemeanor | Up to 1 year jail, $4,000 fine |
| Prior conviction or victim under 18 | Third-degree felony | 2 to 10 years prison |
| Threatening to produce or distribute | Class B misdemeanor | Up to 180 days jail |
| Threats involving victim under 18 | Class A misdemeanor | Up to 1 year jail |
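
The table's four rows can be read as a small decision rule. The sketch below encodes only what the table states. The function name and parameters are hypothetical, and it assumes that a prior conviction does not change the classification of a threat, since the table lists no such enhancement.

```python
def classify_offense(threat_only: bool, victim_under_18: bool,
                     prior_conviction: bool = False) -> str:
    """Map the rows of the table above to an offense classification."""
    if threat_only:
        # Threat rows: the table lists no prior-conviction enhancement,
        # so this sketch assumes none applies.
        return "Class A misdemeanor" if victim_under_18 else "Class B misdemeanor"
    if victim_under_18 or prior_conviction:
        return "Third-degree felony"
    return "Class A misdemeanor"


print(classify_offense(threat_only=False, victim_under_18=False))  # Class A misdemeanor
print(classify_offense(threat_only=False, victim_under_18=True))   # Third-degree felony
print(classify_offense(threat_only=True, victim_under_18=False))   # Class B misdemeanor
```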

SB 441: Expanded Civil Liability for Intimate Deepfakes

Senate Bill 441, signed by Governor Abbott on June 20, 2025, and effective September 1, 2025, creates comprehensive civil liability for nonconsensual intimate deepfakes. The law allows victims to seek civil damages against:

- Individuals who create nonconsensual intimate deepfakes

- Websites that host such content

- Payment processors that manage payment systems for sites hosting nonconsensual deepfake content

SB 441 also strengthens requirements for obtaining consent before creating intimate visual material, ensuring that consent is documented and informed.

HB 3133: Platform Removal Requirements

House Bill 3133, signed on June 20, 2025, requires social media platforms to provide easily accessible and timely complaint systems for reporting alleged sexually explicit deepfakes and mechanisms for removing such material. Platforms that fail to comply face enforcement under Texas consumer protection laws.

HB 581: Age Verification for AI Sexual Content Tools

House Bill 581, signed on June 20, 2025, requires operators of websites with publicly available tools capable of creating "artificial sexual material harmful to minors" to implement reasonable age verification methods. This provision targets AI image generators that could be used to create harmful content involving minors.

Political Deepfakes: Election Code and HB 366

Texas was the first state in the nation to enact legislation restricting deepfakes in campaign advertisements. The original 2019 law made it illegal to create a deepfake video with intent to injure a candidate or influence an election result within 30 days of an election. Violations carry up to one year in county jail and a $4,000 fine.

However, the original law had significant limitations: it applied only to video content, required proof of intent, and was restricted to the 30-day pre-election window. It also covered only state-level races, not federal contests.

House Bill 366, sponsored by former House Speaker Dade Phelan, addressed these gaps. The bill passed both chambers and requires any political advertising that uses altered images, audio, or video, including AI-generated content, to include a disclosure stating the content did not occur in reality. The requirement applies to officeholders, candidates, or political committees that spend more than $100 on political advertising.

Failure to include the required disclosure is a Class A misdemeanor, punishable by up to one year in jail and a fine of up to $4,000. The Texas Ethics Commission determines the specific rules for disclosure format, including font size, color, and placement.
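
The HB 366 disclosure trigger described above combines three tests: who is paying, how much they spend, and whether the ad uses altered media. A minimal sketch of that rule follows. The names are hypothetical, the spender labels are plain strings chosen for illustration, and the threshold is read as strictly more than $100, as the text above states.

```python
# Categories and threshold as described in the text above; names are hypothetical.
COVERED_SPENDERS = {"officeholder", "candidate", "political committee"}
SPEND_THRESHOLD_USD = 100


def disclosure_required(spender: str, ad_spend_usd: float,
                        uses_altered_media: bool) -> bool:
    """Return True when all three conditions described for HB 366 are met."""
    return (
        spender in COVERED_SPENDERS
        and ad_spend_usd > SPEND_THRESHOLD_USD
        and uses_altered_media
    )


print(disclosure_required("candidate", 250.0, True))   # True
print(disclosure_required("candidate", 100.0, True))   # False: not more than $100
print(disclosure_required("candidate", 250.0, False))  # False: no altered media
```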

Government AI Regulation: SB 1964

Senate Bill 1964, signed by Governor Abbott on June 20, 2025, and effective September 1, 2025, establishes a comprehensive framework for government use of AI in Texas.

Key Requirements

SB 1964 requires the Department of Information Resources (DIR) to maintain an inventory of AI systems used by state agencies and to create an AI code of ethics for state and local governments. The code of ethics must address:

- Human oversight of AI decision-making

- Fairness and non-discrimination

- Transparency in AI operations

- Data privacy protections

- Accountability mechanisms

- Regular evaluation of AI system performance

Heightened Scrutiny Classification

The bill establishes a tiered classification system for government AI, including a "heightened scrutiny" category for AI systems that autonomously influence consequential decisions such as benefit eligibility, licensing, or other government actions that significantly affect individuals.

Advisory Board

An eight-member advisory board assists state agencies with AI development and deployment, providing guidance on responsible AI use within government operations.

AI and Employment in Texas

TRAIGA applies to AI systems used in employment contexts, but Texas's approach differs significantly from jurisdictions like Illinois and New York City that have enacted specific AI hiring regulations.

What TRAIGA Requires for Employers

TRAIGA prohibits developing or deploying an AI system with the intent to discriminate against a protected class under federal or state law. However, disparate impact alone, without evidence of intentional discrimination, does not violate TRAIGA.

The law does not require:

- Disclosure to job applicants or employees about AI use in hiring

- Mandatory bias audits of AI hiring tools

- Impact assessments for automated employment decision tools

Only state agencies and healthcare providers have mandatory AI disclosure obligations under Texas law. Private employers using AI for hiring, screening, or performance evaluation have no specific disclosure requirements under TRAIGA.

Practical Compliance for Employers

While not legally required, Texas employers should consider adopting written AI policies and auditing their AI hiring tools. Because the Attorney General can enforce TRAIGA's ban on intentional discrimination, companies should retain meaningful human oversight over AI outputs that influence employment decisions and periodically reassess tools for bias or unintended discriminatory effects.

Federal AI Policy and Texas

Executive Order 14365

President Trump's Executive Order 14365, signed December 11, 2025, aims to establish a national AI policy framework and reduce diverging state regulations. The order creates a DOJ AI Litigation Task Force empowered to challenge state AI laws on constitutional grounds, including arguments based on the Commerce Clause and federal preemption.

Texas's Position

Texas's TRAIGA was designed with potential federal preemption challenges in mind. The law's business-friendly approach, intent-based liability standard, and lack of private right of action align with the federal government's stated preference for minimally burdensome AI regulation.

TRAIGA's safe harbor provisions, particularly the NIST framework compliance defense, also align with federal standards, making the law less likely to be challenged as imposing conflicting or duplicative requirements.

Protected Categories

The executive order exempts several categories of state laws from potential preemption challenges, including child safety protections and state government AI procurement. Texas's deepfake laws protecting minors (HB 581, SB 441) and government AI oversight (SB 1964) fall within these protected categories.

Looking Ahead: Texas AI Regulatory Future

Texas's 89th Legislative Session produced an unprecedented volume of AI legislation, establishing the state as a leader in AI governance. TRAIGA's January 1, 2026 effective date means businesses are now actively implementing compliance programs.

Key developments to watch include:

- The Texas AI Council's initial guidance and recommendations

- The regulatory sandbox program's first participants and outcomes

- Attorney General enforcement priorities under TRAIGA

- Potential federal preemption challenges to state AI laws

- Whether Texas will expand employer AI disclosure requirements in future sessions

The tension between Texas's pro-business philosophy and the need for consumer protection will continue to shape the state's AI regulatory trajectory. TRAIGA's intent-based framework may prove influential as other states consider their own comprehensive AI legislation.
