California AI Laws and Regulation (2026)

California leads the nation in artificial intelligence legislation. The state has enacted dozens of AI-related laws covering frontier model safety, employment discrimination, healthcare transparency, deepfake content, training data disclosure, and civil liability. Governor Gavin Newsom signed at least seven AI bills into law during the 2025 legislative session, adding to a growing body of AI regulation that began in earnest during 2024.

This guide covers every major California AI law currently in effect or taking effect soon, notable vetoed legislation, and the impact of federal AI policy on the state. It is for informational purposes only. Consult an attorney for advice specific to your situation.

SB 1047: The Vetoed AI Safety Bill

Understanding California's current AI landscape requires starting with the bill that did not become law. SB 1047, the Safe and Secure Innovation for Frontier Artificial Intelligence Models Act, was authored by Senator Scott Wiener and passed both chambers of the legislature in 2024.

What SB 1047 Would Have Done

The bill targeted "covered models," defined as AI models trained using more than 10^26 integer or floating-point operations at a cost of more than $100 million, as well as covered models fine-tuned using at least 3 × 10^25 operations at a cost of more than $10 million. It proposed mandatory safeguards including "kill switch" mechanisms for shutting down dangerous AI systems, rigorous testing and auditing protocols, enhanced cybersecurity protections, and the creation of a new state authority known as the Board of Frontier Models.

Most significantly, SB 1047 would have made technology companies legally liable for harms caused by their AI models.

The Veto

On September 29, 2024, Governor Newsom vetoed SB 1047. In his veto message, he stated that the bill "applied stringent standards to even basic functions, so long as they were deployed by large systems" without considering the specific risks of how an AI system is deployed, including whether it involves critical decision-making or sensitive data.

The veto drew strong reactions. The Center for AI Safety, Elon Musk, the Los Angeles Times editorial board, and Anthropic had all supported the bill. Meta, OpenAI, and House Speaker Nancy Pelosi opposed it, arguing it would hinder innovation.

The Legacy

While SB 1047 did not become law, it set the stage for SB 53 the following year. Senator Wiener took the feedback from the veto and drafted a narrower bill that focused on transparency rather than liability.

SB 53: Transparency in Frontier AI Act

Governor Newsom signed Senate Bill 53 into law on September 29, 2025, exactly one year after vetoing SB 1047. SB 53 creates the first enforceable state regulatory framework in the United States for the most advanced artificial intelligence systems.

Who Is Covered

SB 53 applies to "frontier models," defined as foundation models trained using more than 10^26 integer or floating-point operations (FLOPs). The law also creates a category of "large frontier developers," defined as frontier developers with annual revenues exceeding $500 million.
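The two thresholds above can be read as a simple two-step classification. The following is a minimal sketch, assuming only the figures stated in this article; the `Developer` type and its field names are hypothetical, and the statute's full definitions (for example, how revenue and compute are measured) control in practice.

```python
from dataclasses import dataclass

# Thresholds as summarized in this article; the statutory text controls.
FRONTIER_COMPUTE_THRESHOLD = 1e26          # training operations
LARGE_DEVELOPER_REVENUE_USD = 500_000_000  # annual revenue


@dataclass
class Developer:
    """Hypothetical record used only for illustration."""
    name: str
    max_training_ops: float    # compute used for the largest training run
    annual_revenue_usd: float


def classify(dev: Developer) -> str:
    """Return the SB 53 category suggested by the two thresholds."""
    if dev.max_training_ops <= FRONTIER_COMPUTE_THRESHOLD:
        return "not covered"
    if dev.annual_revenue_usd > LARGE_DEVELOPER_REVENUE_USD:
        return "large frontier developer"
    return "frontier developer"


if __name__ == "__main__":
    print(classify(Developer("ExampleLab", 3e26, 2_000_000_000)))
```

Under this reading, a developer above the compute threshold but below the revenue threshold would still owe transparency reports, while the annual framework obligation attaches only to the "large" category.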

Safety Framework Requirements

Large frontier developers must publish an annual framework explaining the mechanisms they have in place to identify, mitigate, and govern catastrophic risks from their AI models. This framework must be publicly available and updated regularly.

Transparency Reports

Before deploying a new or substantially modified frontier model, all frontier developers (not just those classified as "large") must issue a transparency report. These reports must describe the model's capabilities, intended uses, known limitations, and results of risk assessments.

Critical Incident Reporting

Frontier developers must notify the California Office of Emergency Services (Cal OES) of critical safety incidents. Standard incidents must be reported within 15 days of discovery. Incidents posing imminent danger require notification within 24 hours.
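A compliance calendar might compute the two reporting windows as follows. This is a rough sketch that assumes the clock starts at the moment of discovery and that the 15-day window counts calendar days; both are assumptions, and the function name `report_deadline` is hypothetical.

```python
from datetime import datetime, timedelta

STANDARD_WINDOW = timedelta(days=15)   # standard critical safety incidents
IMMINENT_WINDOW = timedelta(hours=24)  # incidents posing imminent danger


def report_deadline(discovered_at: datetime, imminent_danger: bool) -> datetime:
    """Latest notification time to Cal OES under the windows described above."""
    window = IMMINENT_WINDOW if imminent_danger else STANDARD_WINDOW
    return discovered_at + window


if __name__ == "__main__":
    found = datetime(2026, 3, 1, 9, 0)
    print(report_deadline(found, imminent_danger=False))
    print(report_deadline(found, imminent_danger=True))
```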

Whistleblower Protections

Employers must maintain anonymous channels for reporting concerns regarding catastrophic risk. The law prohibits retaliation against employees or contractors who report safety concerns.

Penalties

Large frontier developers that fail to comply with their AI framework, make prohibited deceptive statements, or violate publication and reporting requirements may face civil penalties of up to $1 million per violation, depending on the severity of the offense. Most provisions of SB 53 took effect on January 1, 2026.

AI Transparency and Training Data Laws

California has enacted multiple laws requiring transparency about AI-generated content and the data used to train AI systems.

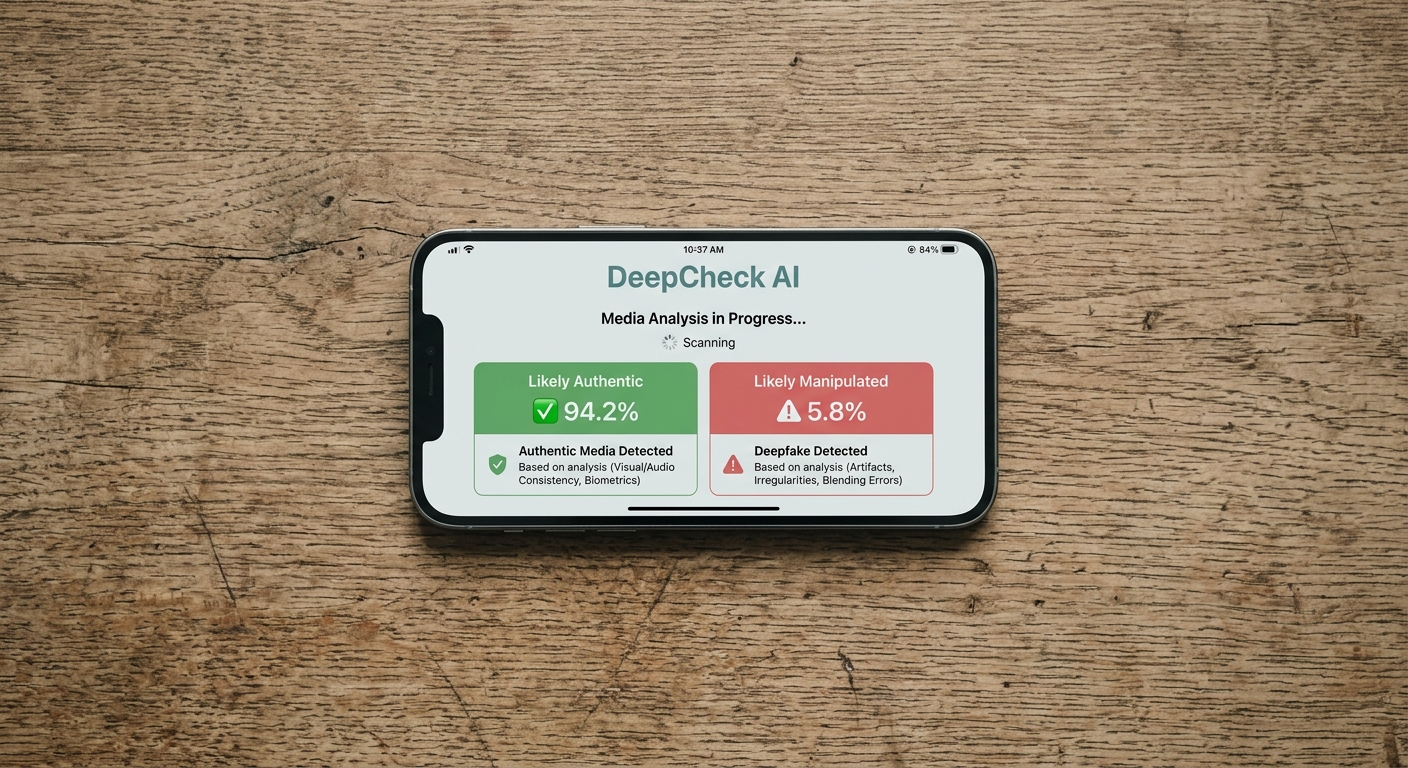

SB 942: California AI Transparency Act

Governor Newsom signed SB 942 into law on September 19, 2024. The law applies to "covered providers," defined as companies that produce generative AI systems that are publicly accessible within California and have over 1 million monthly visitors or users.

The law imposes several requirements on covered providers.

AI Detection Tools: Covered providers must make available a free, publicly accessible AI detection tool that can identify content created by their systems.

Manifest Disclosures: Providers must offer users the option to include a visible disclosure in AI-generated image, video, or audio content that identifies the content as AI-generated. The disclosure must be clear, conspicuous, and understandable to a reasonable person.

Latent Disclosures: Providers must include latent (embedded, machine-readable) disclosures in AI-generated image, video, and audio content, to the extent technically feasible.

Third-Party Licensing: If a covered provider discovers that a third-party licensee has modified a licensed AI system to remove disclosure capabilities, the provider must revoke the license within 96 hours.

Violations carry a civil penalty of $5,000 per violation. Beginning January 1, 2027, hosting platforms must also comply with these requirements. An amendment signed October 13, 2025 (AB 853) extended certain compliance deadlines to August 2, 2026.
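The 96-hour license revocation window and the per-violation penalty described above reduce to simple arithmetic. The sketch below assumes the window runs from the moment of discovery and treats the violation count as a given input; how violations are counted is a legal question this code does not answer, and both function names are hypothetical.

```python
from datetime import datetime, timedelta

PENALTY_PER_VIOLATION_USD = 5_000                # figure stated above
LICENSE_REVOCATION_WINDOW = timedelta(hours=96)  # figure stated above


def revocation_deadline(discovered_at: datetime) -> datetime:
    """Latest time to revoke a licensee that removed disclosure capabilities."""
    return discovered_at + LICENSE_REVOCATION_WINDOW


def penalty_exposure(violation_count: int) -> int:
    """Total civil penalty in dollars for a given number of violations."""
    if violation_count < 0:
        raise ValueError("violation_count must be non-negative")
    return violation_count * PENALTY_PER_VIOLATION_USD


if __name__ == "__main__":
    print(revocation_deadline(datetime(2026, 8, 3, 12, 0)))
    print(penalty_exposure(3))
```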

AB 2013: Training Data Transparency

By January 1, 2026, any developer releasing a new or substantially modified generative AI system in California must publish training data documentation on their website. The documentation must disclose whether training datasets include personal information or aggregate consumer information as defined in the California Consumer Privacy Act (CCPA).

AI in Employment: FEHA Regulations

California became the first state to regulate AI in employment decisions through its existing civil rights framework when the Civil Rights Council approved new regulations under the Fair Employment and Housing Act (FEHA) on June 27, 2025. These regulations took effect on October 1, 2025.

What Is Covered

The regulations apply to "automated decision systems" (ADS), defined as any computational process, including AI, machine learning, or other algorithms, that makes or helps make decisions regarding employees or job applicants.

Core Prohibitions

It is unlawful under FEHA to use any ADS or selection criteria that create a disparate impact against a protected class. Protected classes include race, age, religious creed, national origin, gender, disability, and other characteristics specified under FEHA.

Anti-Bias Testing Requirements

ADS tools must undergo anti-bias testing or independent audits that are timely, repeatable, and transparent. A single validation at the time of launch is not sufficient. Fairness checks must be integrated as regular and systemized maintenance practices throughout the tool's use.

Transparency and Notice

Applicants and employees must receive both pre-use and post-use notices explaining when and how ADS tools are being used, what rights they have to opt out, and how to appeal or request human review.

Record Retention

Employers must retain ADS-related documentation for at least four years. This includes data inputs, outputs, decision criteria, audit results, and correspondence related to the tools.
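An employer's records system could compute the earliest permissible purge date for ADS documentation along these lines. This is a sketch only: it assumes the four-year period runs from the date the record was created, which is an assumption rather than a statement of how the regulations measure the period, and `retention_end` is a hypothetical helper.

```python
from datetime import date

RETENTION_YEARS = 4  # minimum retention period stated above


def retention_end(record_date: date) -> date:
    """Earliest date a record could be purged, four years after record_date."""
    target_year = record_date.year + RETENTION_YEARS
    try:
        return record_date.replace(year=target_year)
    except ValueError:
        # Feb 29 with a non-leap target year: fall back to Feb 28.
        return record_date.replace(year=target_year, day=28)


if __name__ == "__main__":
    print(retention_end(date(2025, 10, 1)))
```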

Vendor Liability

The regulations expand the definitions of "agents" and "employment agencies." An agent now includes any person acting on behalf of an employer to perform functions traditionally exercised by the employer, such as recruitment and screening. This means employers cannot avoid FEHA liability by outsourcing hiring decisions to a vendor tool. If the tool is discriminatory, both the vendor and the employer may face claims.

Specific Application Areas

The regulations address online application technology, providing that employers cannot use tools that limit, screen out, rank, or prioritize applicants based on their schedule if doing so has a disparate impact based on religion, disability, or medical condition, unless the technology is job-related and consistent with business necessity and includes a mechanism to request an accommodation.

The regulations also classify certain ADS tools as "medical or psychological examinations." An ADS such as a test, question, puzzle, game, or other challenge that is likely to elicit information about a disability can be considered a medical inquiry under FEHA.

AI in Healthcare: AB 3030

Governor Newsom signed Assembly Bill 3030 on September 28, 2024. The law took effect on January 1, 2025, making it one of the earliest enforceable AI healthcare regulations in the country.

Disclosure Requirements

AB 3030 requires any health facility, clinic, or physician's office to notify patients when using generative AI to communicate "patient clinical information." The format of the disclosure varies by communication type.

| Communication Type | Disclosure Requirement |
|---|---|
| Written physical or digital communications | Prominent notice at the beginning of each communication |
| Chat-based or continuous online interactions | Notice prominently displayed throughout the interaction |
| Audio communications (including voicemails) | Verbal notice at the beginning and end of the interaction |

Exemptions

The law exempts communications that are read and reviewed by a licensed or certified human healthcare provider before being sent. Administrative and business communications such as appointment scheduling, check-up reminders, and billing are also exempt.
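The disclosure formats and exemptions above can be expressed as a lookup with a few guard conditions. The following is an illustrative sketch, not a compliance tool: the `Channel` enum, the `required_disclosure` function, and its boolean parameters are all hypothetical names, and the disclosure strings are taken from the table in this article.

```python
from enum import Enum
from typing import Optional


class Channel(Enum):
    WRITTEN = "written"  # physical or digital written communications
    CHAT = "chat"        # chat-based or continuous online interactions
    AUDIO = "audio"      # audio communications, including voicemails


DISCLOSURE = {
    Channel.WRITTEN: "Prominent notice at the beginning of each communication",
    Channel.CHAT: "Notice prominently displayed throughout the interaction",
    Channel.AUDIO: "Verbal notice at the beginning and end of the interaction",
}


def required_disclosure(
    channel: Channel,
    uses_generative_ai: bool,
    contains_clinical_info: bool,
    reviewed_by_licensed_provider: bool,
) -> Optional[str]:
    """Return the disclosure format, or None if no disclosure is indicated."""
    if not uses_generative_ai:
        return None  # the law addresses generative AI communications
    if not contains_clinical_info:
        return None  # administrative messages (scheduling, billing) are exempt
    if reviewed_by_licensed_provider:
        return None  # human-reviewed communications are exempt
    return DISCLOSURE[channel]


if __name__ == "__main__":
    print(required_disclosure(Channel.AUDIO, True, True, False))
```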

Penalties

Licensed health facilities that violate AB 3030 may face civil penalties of up to $25,000 per violation. For individual physicians, the Medical Board of California or the Osteopathic Medical Board of California has enforcement jurisdiction.

AB 489: AI Impersonation of Healthcare Professionals

Governor Newsom signed AB 489 on October 11, 2025, and the law took effect on January 1, 2026. AB 489 prohibits AI systems and related technologies from using specific terms, letters, or phrases that imply a user is receiving care from a licensed healthcare professional when no such human oversight exists.

This law complements AB 3030 by addressing a different angle of AI in healthcare. While AB 3030 requires disclosure when AI generates clinical communications, AB 489 prevents AI systems from claiming to be or implying they are licensed providers.

AI Civil Liability: AB 316

Governor Newsom signed Assembly Bill 316 on October 13, 2025, effective January 1, 2026. The law eliminates what some have called the "AI did it" defense in civil litigation.

What the Law Does

In any civil action where a defendant developed, modified, or used artificial intelligence alleged to have caused harm to the plaintiff, the defendant may not assert that the AI "autonomously" caused the harm. In other words, companies and individuals cannot shift blame to their AI systems to avoid civil liability.

What the Law Preserves

AB 316 does not eliminate all defenses. Defendants may still present other affirmative defenses, including evidence relevant to causation or foreseeability. They may also present evidence about the comparative fault of any other party. The law simply prevents the specific defense that AI acted on its own without human responsibility.

Definition of AI Under AB 316

The law defines "artificial intelligence" broadly as "an engineered or machine-based system that varies in its level of autonomy and that can, for explicit or implicit objectives, infer from the input it receives how to generate outputs that can influence physical or virtual environments."

Deepfake Laws: Enacted and Challenged

California has attempted to regulate deepfakes in both sexual content and political contexts, with mixed legal results.

Election Deepfakes: AB 2655, AB 2839, and AB 2355

On September 17, 2024, Governor Newsom signed three bills targeting deepfakes in elections.

AB 2655 (Defending Democracy from Deepfake Deception Act) required large online platforms to block materially deceptive AI-generated content during the 120 days before an election, with disclosure requirements extending beyond that period.

AB 2839 prohibited the knowing distribution of deceptive AI-generated or manipulated election content within 120 days before and 60 days after an election. It allowed any person to sue for damages over election deepfakes.

AB 2355 mandated disclaimers for AI-generated political advertisements created by political committees.

Federal Court Challenges

Both AB 2655 and AB 2839 were struck down by U.S. District Judge John Mendez. The court ruled AB 2655 was preempted by Section 230 of the Communications Decency Act, which protects online platforms from liability for user-generated content. On August 29, 2025, Judge Mendez also struck down AB 2839 as unconstitutional under the First Amendment, writing that the law "discriminates based on content, viewpoint, and speaker and targets constitutionally protected speech." He noted that "a mandatory disclaimer for parody or satire would kill the joke."

These rulings leave California without enforceable election deepfake laws, though AB 2355's disclaimer requirements for political committee advertisements may still stand separately.

Sexually Explicit Deepfakes

California's existing laws on nonconsensual intimate imagery and revenge pornography have been extended to cover AI-generated content. The state was among the first to address deepfake pornography through legislation.

Pending and Future AI Legislation

California's 2025 legislative session saw at least 33 AI-related bills introduced, with 16 passing the legislature. Governor Newsom signed seven and vetoed five. Ten additional AI bills have been carried over for consideration in the 2026 session.

Key Areas Under Consideration

Pending legislation covers algorithmic pricing (addressing concerns about AI-driven price fixing), AI bot identification requirements, high-risk automated decision-making in housing and education, AI and copyright protections, and additional anti-discrimination measures.

New bills may be introduced before the February 20, 2026, deadline for new legislation in the current session.

Federal AI Policy and California

California's extensive AI regulatory framework puts the state at the center of the debate over federal preemption of state AI laws.

Executive Order 14365

On December 11, 2025, President Trump issued Executive Order 14365, "Ensuring a National Policy Framework for Artificial Intelligence." The order established an AI Litigation Task Force within the Department of Justice empowered to challenge state AI laws and directed the Secretary of Commerce to evaluate state AI laws that may conflict with federal policy goals.

Potential Impact on California

California faces the most potential exposure to federal preemption challenges given the breadth of its AI legislation. However, several factors limit the immediate impact.

First, executive orders do not independently override state law. Federal preemption typically requires congressional action or court rulings. Second, the order carves out certain categories from potential preemption, including child safety protections and state government procurement and use of AI. Third, California's employment regulations under FEHA may be protected by the state's authority to enforce its own civil rights laws.

On March 20, 2026, the Trump Administration released its "National Policy Framework for Artificial Intelligence," calling on Congress to enact a unified federal AI standard. If Congress acts on these recommendations, some of California's AI laws could face preemption challenges. For now, all enacted California AI laws remain in full effect.

Summary of Key California AI Laws

| Law | Year | Subject | Effective Date |
|---|---|---|---|
| SB 1047 | 2024 | Frontier AI safety (VETOED) | N/A |
| AB 2013 | 2024 | Training data transparency | Jan. 1, 2026 |
| AB 3030 | 2024 | Healthcare AI disclosure | Jan. 1, 2025 |
| SB 942 | 2024 | AI content transparency/watermarking | Jan. 1, 2026 |
| AB 2655 | 2024 | Election deepfakes (STRUCK DOWN) | N/A |
| AB 2839 | 2024 | Election deepfake ads (STRUCK DOWN) | N/A |
| AB 2355 | 2024 | Political ad AI disclaimers | Signed Sept. 2024 |
| SB 53 | 2025 | Frontier AI transparency | Jan. 1, 2026 |
| AB 316 | 2025 | AI civil liability defense ban | Jan. 1, 2026 |
| AB 489 | 2025 | AI healthcare impersonation ban | Jan. 1, 2026 |
| AB 853 | 2025 | AI Transparency Act amendments | Extends deadlines to Aug. 2026 |
| FEHA regs | 2025 | AI employment discrimination | Oct. 1, 2025 |

Sources and References

- Governor Newsom signs SB 53 (gov.ca.gov)
- SB 53 Bill Text (leginfo.legislature.ca.gov)
- SB 942 California AI Transparency Act Bill Text (leginfo.legislature.ca.gov)
- Civil Rights Council AI Employment Regulations Approval (calcivilrights.ca.gov)
- AB 3030 GenAI Healthcare Notification Requirements (mbc.ca.gov)
- AB 316 Bill Text - AI Defenses (leginfo.legislature.ca.gov)
- Governor Newsom signs bills to combat deepfake election content (gov.ca.gov)
- SB 1047 Veto Message (gov.ca.gov)
- Executive Order on AI National Policy Framework (whitehouse.gov)
- NCSL Artificial Intelligence 2025 Legislation Tracker (ncsl.org)