Virginia AI Laws and Regulation (2026)

Overview of Virginia AI Laws

Virginia occupies a distinctive position in the national AI regulatory landscape. The state was a pioneer in addressing deepfake harms, becoming the first in the nation to criminalize deepfake revenge pornography in 2019. Governor Youngkin's Executive Order 30 established one of the most detailed state-level AI governance frameworks for government operations in 2024. Yet when the legislature passed a comprehensive AI regulation bill in 2025, the Governor vetoed it, and most AI bills in the 2026 session were tabled until 2027.

This tension between early action and regulatory restraint defines Virginia's approach to AI governance. The state has moved decisively on specific harms like deepfake pornography while resisting broader regulatory frameworks that could affect the technology industry.

This article covers Virginia's enacted AI-related laws, the vetoed comprehensive AI bill, executive branch AI governance, pending legislation, and how federal policy shapes the state's approach. This information is current as of March 2026, but you should consult an attorney for advice specific to your situation.

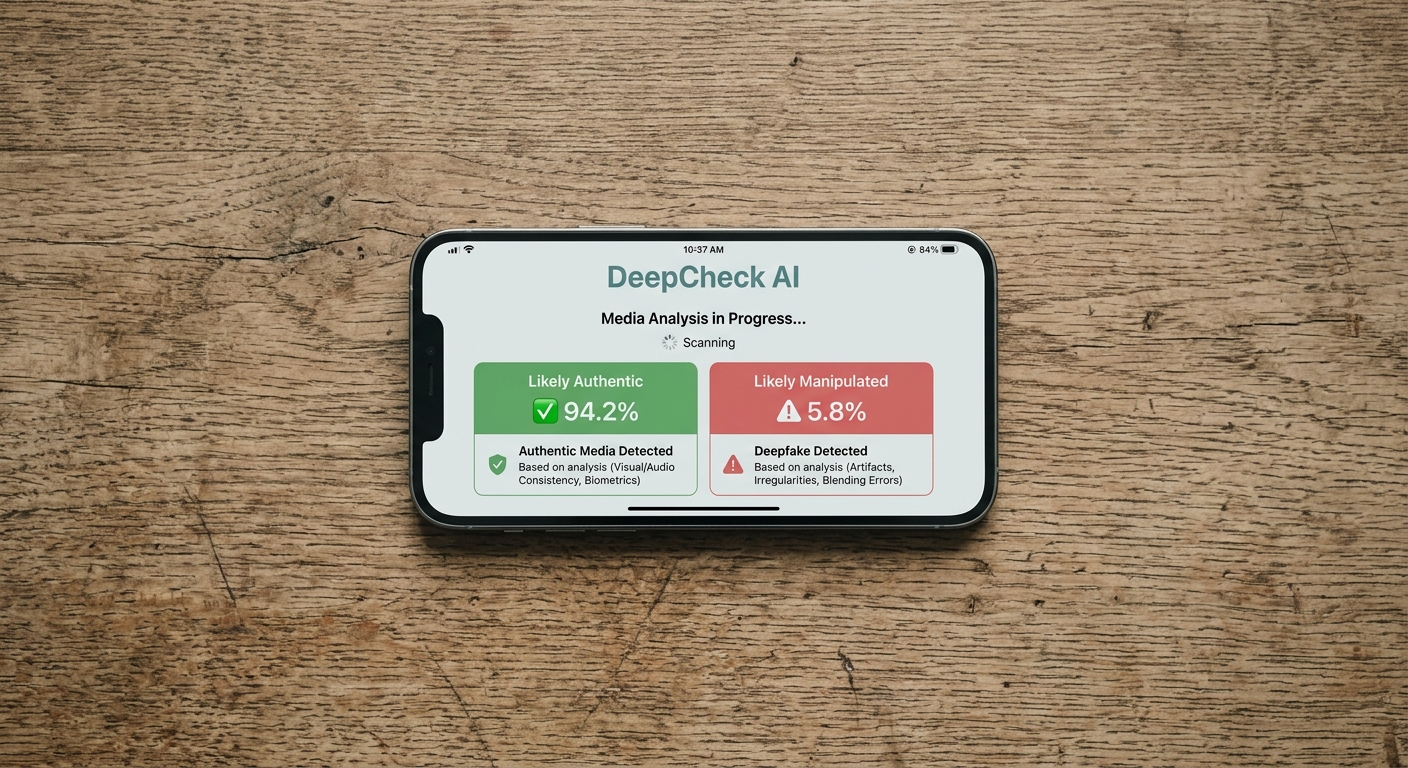

Deepfake Revenge Pornography: Va. Code Section 18.2-386.2

Virginia made history in 2019 when it became the first state to criminalize the distribution of deepfake revenge pornography. The state amended its existing nonconsensual pornography statute to explicitly cover AI-generated and digitally manipulated intimate images.

What the Law Covers

Va. Code Section 18.2-386.2 makes it unlawful to maliciously disseminate or sell any videographic or still image that depicts another person who is totally nude, in a state of undress, or engaged in a sexual act, without that person's consent, with the intent to coerce, harass, or intimidate.

The statute specifically covers deepfakes by including within its definition a person "whose image was used in creating, adapting, or modifying a videographic or still image with the intent to depict an actual person and who is recognizable as an actual person by the person's face, likeness, or other distinguishing characteristic."

Penalties

| Offense | Classification | Maximum Penalty |
|---|---|---|
| First offense | Class 1 misdemeanor | Up to 1 year in jail and/or $2,500 fine |
| Subsequent offenses or aggravating factors | Class 1 misdemeanor (enhanced) | Same classification, stronger prosecution basis |

Creation of Intimate Images

Virginia also has a companion statute, Va. Code Section 18.2-386.1, which addresses the unlawful creation of intimate images, such as nonconsensually recording a person who is nude or undressed in a private setting. Together, these statutes provide a framework for addressing both the creation and the distribution of nonconsensual intimate images.

Significance

Virginia's early action on deepfake pornography set a precedent that many states have since followed. By 2026, at least 46 states had enacted some form of deepfake pornography legislation, many building on the framework Virginia established.

Executive Order 30: State Government AI Standards (2024)

On January 18, 2024, Governor Glenn Youngkin signed Executive Order 30, establishing comprehensive AI governance standards for Virginia state government. The order is one of the most detailed state-level AI executive orders in the country.

AI Policy Standards

EO 30 directs the Virginia Information Technologies Agency (VITA) to develop new AI Policy Standards that establish uniform guiding principles for all Executive Branch agencies. These standards must include:

- Guidance on the ethical use of AI

- A mandatory approval process for AI implementation

- Mandatory disclaimers to accompany agency products generated by AI

- Measures to protect personal data

Approval Process

Before deploying any AI capabilities, state agencies must submit an application to VITA that identifies and describes the AI technologies at the model level, including model inputs, output data type and structure, model algorithms, and data sets used for training. This level of detail goes beyond what most state AI executive orders require.

Permitted Use Cases

The executive order specifies that AI capabilities may only be used when they are the optimal choice to achieve positive outcomes for Virginia citizens, such as improving government services, reducing wait times, or limiting bureaucracy and delays. This creates a purpose-driven standard for state AI deployment.

Education Guidelines

EO 30 addresses AI in education, establishing that "education is ultimately a human endeavor" and that "AI should never fully replace teachers." This principle guides how Virginia's public schools and universities approach AI integration.

Law Enforcement Standards

The order directs the Secretary of Public Safety and Homeland Security, in conjunction with the Attorney General, to develop AI standards for all Executive Branch law enforcement agencies. This includes studying safeguards to protect children from online predators using AI.

AI Task Force

EO 30 also created an AI Task Force that produced a comprehensive strategy report. The Task Force's recommendations have informed subsequent legislative proposals and state agency AI adoption practices.

The Vetoed Comprehensive AI Bill: HB 2094 (2025)

The most significant AI legislation to move through the Virginia General Assembly was House Bill 2094, the High-Risk Artificial Intelligence Developer and Deployer Act. Introduced by Delegate Michelle Maldonado, the bill passed both chambers in February 2025 but was vetoed by Governor Youngkin on March 24, 2025.

What the Bill Would Have Done

HB 2094 would have made Virginia the second state after Colorado to enact a comprehensive AI regulation law. The bill targeted "high-risk" AI systems, defined as those that make or significantly influence consequential decisions about people in areas including:

- Employment and hiring

- Healthcare services

- Housing

- Education enrollment and opportunities

- Insurance

- Financial and lending services

- Legal services

- Parole, probation, and pretrial release

Developer Requirements

AI developers would have been required to:

- Document the system's intended use, known risks, and performance characteristics

- Disclose risks of algorithmic discrimination to deployers

- Provide information about the data used to train the system

- Make available details about the system's capabilities and limitations

Deployer Requirements

Organizations deploying high-risk AI would have been required to:

- Implement a risk management policy

- Conduct detailed impact assessments

- Notify individuals when AI is used in decisions about them

- Allow appeals of AI-driven adverse decisions

- Exercise reasonable care to prevent algorithmic discrimination

Why It Was Vetoed

Governor Youngkin cited several concerns in his veto message. He stated that the regulatory framework "fail[ed] to account for the rapidly evolving and fast-moving nature of the AI industry" and put "an especially onerous burden on smaller firms and startups." Industry analyses estimated compliance costs of approximately $290 million for Virginia's AI innovators, with individual developers facing nearly $30 million in regulatory burden.

Impact of the Veto

The veto left Virginia without comprehensive AI regulation and sent a signal to other states considering similar legislation. The Center for Strategic and International Studies noted that the veto raised broader questions about the viability of state-level comprehensive AI regulation. Those questions have sharpened since federal policy under Executive Order 14365 (December 2025) began pushing toward lighter-touch approaches.

2026 Legislative Session: Most AI Bills Tabled

The 2026 Virginia General Assembly session saw numerous AI bills introduced, but most were tabled until 2027 or carried over for further study.

SB 796: AI Chatbots and Minors Act

Senate Bill 796, the Artificial Intelligence Companion Chatbots and Minors Act, was among the session's most successful AI bills. The bill passed the Virginia Senate by a vote of 39 to 1 and applies to operators of chatbots with 500,000 or more monthly active users worldwide.

Key provisions include:

- Requiring chatbot deployers to ensure that social AI companions are not made available to minors

- Addressing suicidal ideation risks associated with AI chatbots

- Establishing notice requirements for users

- Creating incident reporting obligations

- Making violations actionable under the Virginia Consumer Protection Act

However, the House Communications, Technology and Innovation Committee voted to carry SB 796 over to the 2027 session and referred it to the Joint Commission on Technology and Science for further study.

HB 758: Chatbots and Minors (House Version)

House Bill 758, introduced by Delegate Chris Runion, proposed a similar approach, requiring chatbot companies to keep products with human-like features away from children and to implement reasonable age verification systems. The bill was left in committee as of February 2026.

Healthcare AI Bills

Delegate Maldonado introduced a narrower version of her vetoed 2025 bill, focusing specifically on the healthcare sector. The bill emphasized transparency and impact assessments in healthcare settings. However, it too was continued to the 2027 session.

The Joint Commission on Technology and Science unanimously backed recommendations for healthcare AI legislation in late 2025, including requirements for healthcare providers to establish internal standards for AI systems and to follow transparency rules. These recommendations are expected to inform future legislation.

AI in Education Bills

Two education-focused AI bills also made notable progress in the 2026 session:

Senate Bill 394 would establish a pilot program for practical AI use in public elementary and secondary schools. The bill requires the Board of Education to publish guidance for the "safe, ethical, and equitable use" of AI, with annual reporting and a sunset date of July 1, 2030.

House Bill 1186 takes a more restrictive approach, requiring school boards to create policies prohibiting students from being "required, encouraged, or permitted" to use an AI chatbot for instruction, lessons, or assignments.

These education measures reflect how widespread AI use in schools has become: surveys reported that approximately 85% of teachers and 86% of students used AI tools during the 2024-2025 school year.

Comprehensive AI Legislation

Delegate Maldonado's broader legislative package included four bills addressing AI disclosure, training data transparency, consumer opt-out options, safety testing, and deepfake regulations. Notable among these was HB 2250, which would have been the first state AI safety bill to introduce "Do Not Train" data designations, Training Data Verification Requests, and Training Data Deletion Requests. These bills were continued to 2027.

AI in Employment: Current Status

Virginia does not currently have enacted legislation specifically governing AI in employment decisions. The vetoed HB 2094 would have addressed this area comprehensively, but its defeat means employers using AI in hiring and workforce decisions operate under existing anti-discrimination frameworks.

Applicable Protections

Virginia employers using AI tools for hiring, evaluation, or termination decisions must still comply with:

- Federal civil rights laws (Title VII, ADA, ADEA) that prohibit discrimination in employment

- The Virginia Human Rights Act, which provides state-level employment discrimination protections

- General duty of care obligations that may apply to AI-driven adverse employment actions

Future Direction

The 2027 legislative session is expected to revisit AI in employment regulation, informed by the study recommendations from the Joint Commission on Technology and Science and the ongoing debate over the appropriate scope of state AI regulation.

AI in Elections: No Enacted Law

Unlike many states, Virginia has not enacted legislation specifically addressing AI-generated deepfakes in elections. Bills proposing disclosure requirements for political deepfakes have been introduced in multiple sessions but have been continued or tabled each time.

The state's existing election law framework addresses traditional forms of election fraud and campaign finance violations, but does not contain AI-specific provisions for synthetic media disclosure or penalties for deceptive AI-generated political content.

Federal AI Policy and Virginia

Executive Order 14365

Federal AI policy under Executive Order 14365 (December 2025) aligns with Virginia's current regulatory approach in some respects, particularly the emphasis on avoiding burdensome regulation of AI development. Governor Youngkin's veto of HB 2094 anticipated many of the themes in the federal executive order.

Virginia's Unique Position

Virginia's relationship with federal AI policy is shaped by several factors:

Northern Virginia tech corridor: The state is home to a significant concentration of technology companies and federal contractors in the Northern Virginia area, making AI regulation particularly consequential for the state's economy.

Federal contracting: Many Virginia-based companies develop AI systems for federal government clients, meaning federal AI procurement standards directly affect the state's business landscape.

Data center infrastructure: Virginia hosts the largest concentration of data centers in the world, particularly in Loudoun County. AI infrastructure development is a major economic driver for the state, creating strong incentives for a business-friendly regulatory environment.

Impact on Pending Bills

The federal framework's emphasis on lighter-touch regulation reinforces the position Governor Youngkin took in vetoing HB 2094. However, areas like child safety (relevant to SB 796) and education (relevant to SB 394 and HB 1186) fall within carve-outs in the federal framework that leave room for state regulation.

Virginia's AI Regulatory Landscape

Virginia's approach to AI regulation can be characterized by several key themes:

Pioneer on specific harms: Virginia led the nation on deepfake pornography in 2019 and established strong government AI governance through EO 30 in 2024, demonstrating willingness to act on well-defined AI-related problems.

Resistant to comprehensive regulation: The veto of HB 2094 and the tabling of most 2026 AI bills signal that Virginia's policymakers have so far favored a narrower, sector-specific approach over broad AI regulation.

Study-and-wait approach: Multiple AI bills have been referred to the Joint Commission on Technology and Science for study, suggesting that Virginia is building an evidence base before enacting comprehensive legislation.

Economic considerations front and center: Virginia's position as a major technology hub influences its regulatory calculus, with policymakers weighing the economic impact of AI regulation on the state's tech sector.

Leadership change may shift dynamics: Virginia governors cannot serve consecutive terms, so Governor Youngkin left office in January 2026, and the new administration could shift Virginia's approach to AI regulation significantly. The legislature has already passed comprehensive AI regulation once, suggesting there is legislative appetite for stronger oversight.


Sources and References

- Va. Code Section 18.2-386.2 - Unlawful dissemination of images (law.lis.virginia.gov)

- Va. Code Section 18.2-386.1 - Unlawful creation of image (law.lis.virginia.gov)

- Executive Order 30 - Artificial Intelligence (PDF) (governor.virginia.gov)

- Governor Youngkin Signs Executive Order on AI (governor.virginia.gov)

- VITA - Artificial Intelligence Governance (vita.virginia.gov)

- HB 2094 - 2025 Regular Session (lis.virginia.gov)

- EO 30 Task Force Report (PDF) (vita.virginia.gov)

- JCOTS Limited Study - AI in Healthcare (PDF) (dls.virginia.gov)

- Most AI legislation in Virginia tabled until 2027 (vpm.org)

- Virginia lawmakers propose AI guardrails for education (virginiamercury.com)