Missouri AI Laws and Regulation (2026)

Missouri stands out as one of the last states in the country without enacted deepfake legislation. As of mid-2025, Missouri was one of only three states, alongside Alaska and Ohio, that had not passed any deepfake-specific laws. While the state has no comprehensive AI statute on the books, Missouri lawmakers introduced a flurry of AI-related bills during the 2025 and 2026 legislative sessions, signaling that regulation may be coming soon.

This guide covers Missouri's current legal landscape for artificial intelligence, pending legislation, attorney general actions, and how federal AI policy fills gaps in state regulation. Whether you are a developer, business owner, or legal professional, this is your complete resource for understanding AI law in the Show-Me State.

This article is for informational purposes only and does not constitute legal advice. Consult a licensed Missouri attorney for guidance on specific situations.

Missouri's Current AI Legal Landscape

Missouri has not enacted a comprehensive law specifically regulating artificial intelligence. Unlike states such as Colorado, California, and Illinois, which have enacted AI-specific statutes, Missouri currently relies on its existing legal framework to address AI-related issues.

What Laws Currently Apply to AI in Missouri

Although no Missouri statute uses the phrase "artificial intelligence" in its operative provisions, several existing laws apply to AI systems and their effects:

- Missouri Merchandising Practices Act (MMPA): The state's primary consumer protection statute, Chapter 407 RSMo, prohibits deceptive and unfair business practices. The Attorney General can bring enforcement actions against companies that use AI in deceptive ways, including misleading chatbots or AI-generated marketing claims.

- Anti-discrimination laws: Missouri's Human Rights Act (Chapter 213 RSMo) prohibits discrimination in employment, housing, and public accommodations. AI tools that produce discriminatory outcomes in hiring or lending may violate these protections.

- Data breach notification: Missouri's data breach notification law (Section 407.1500 RSMo) requires notification when personal information is compromised, which applies to AI systems that process or store personal data.

Why Missouri Has Been Slow to Act

Missouri's cautious approach reflects a broader philosophical stance. The state Legislature has historically favored limited regulation and business-friendly policies. Several factors contribute to the delayed action on AI legislation. The technology has evolved faster than most state legislatures can respond. There is genuine disagreement about whether state-level regulation could stifle innovation. Additionally, federal action (or the prospect of it) has led some states to wait.

However, the 2025 and 2026 sessions show a clear shift. Missouri lawmakers are now actively debating multiple AI bills, with growing bipartisan support for at least targeted regulation.

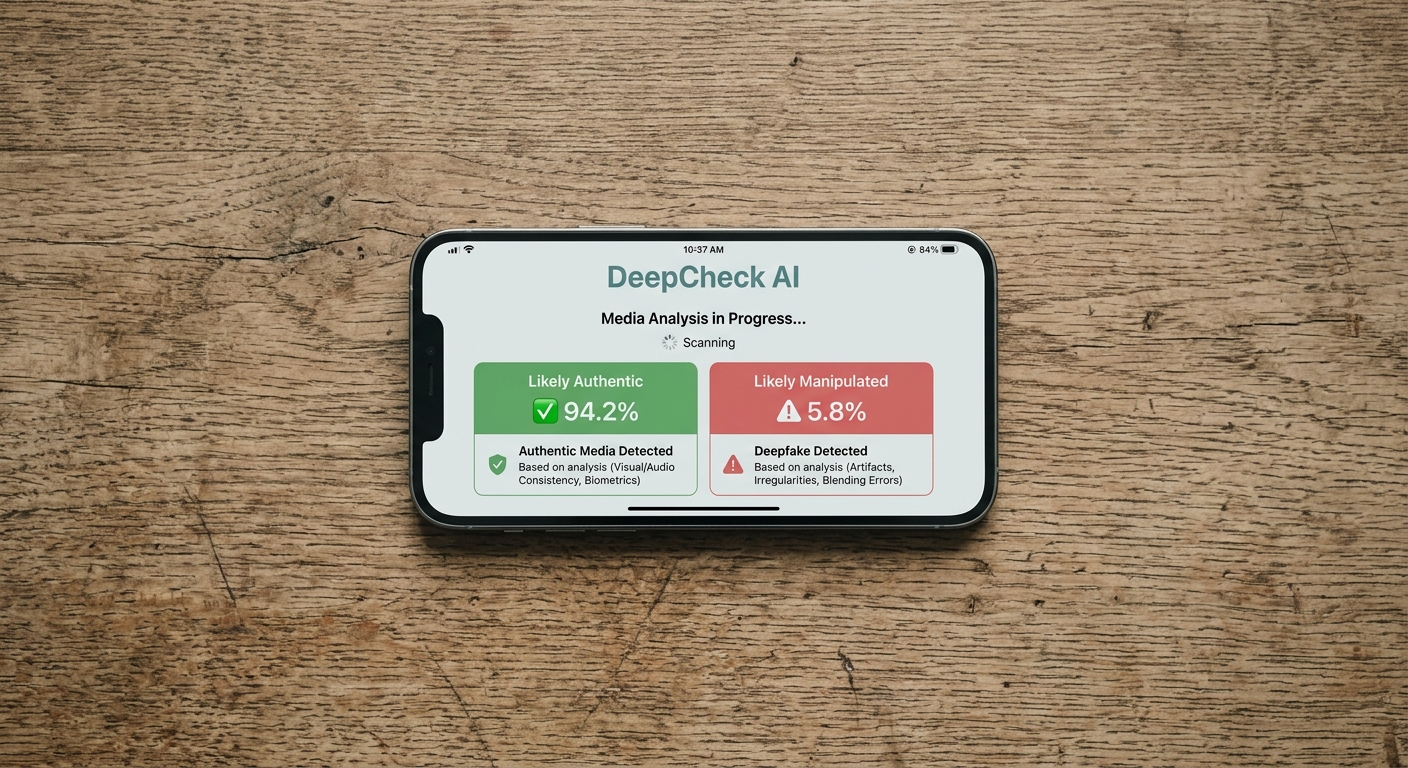

Missouri Has No Deepfake Laws (As of March 2026)

This is Missouri's most significant gap in AI regulation. As of mid-2025, only three states lacked deepfake legislation: Missouri, Alaska, and Ohio. As of March 2026, Missouri still has not enacted a deepfake statute.

This means that under current Missouri law:

- Creating AI-generated intimate images of someone without their consent is not specifically criminalized

- Distributing political deepfakes near elections faces no state-level criminal penalty

- Victims of deepfake exploitation must rely on federal law or general state causes of action like defamation, invasion of privacy, or the MMPA

Federal Protection as a Backstop

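The TAKE IT DOWN Act (P.L. 119-12), signed into federal law in May 2025, provides some protection for Missouri residents. The law makes it a federal crime to knowingly publish nonconsensual intimate images, including AI-generated "digital forgeries." Penalties reach up to 2 years imprisonment when the victim is an adult and up to 3 years when the victim is a minor. It also requires covered online platforms to remove such content within 48 hours of a valid request from the victim. However, it does not address political deepfakes or many other forms of harmful AI-generated content.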

Pending Deepfake Bills in Missouri

Missouri's Legislature is working to close this gap through several bills in the 2026 session.

The Taylor Swift Act (SB 1117)

Senate Bill 1117, commonly known as the "Taylor Swift Act," is Missouri's most prominent pending deepfake bill. Introduced by Senator Fitzwater, the bill is named after pop star Taylor Swift, who became a victim of nonconsensual AI-generated intimate images in January 2024.

What the Bill Would Do

SB 1117 would establish both civil and criminal penalties for creating or sharing nonconsensual intimate digital depictions. Specifically, the bill creates a cause of action against anyone who discloses a digital depiction of someone under 18, or an intimate digital depiction of any individual, when the person knows or recklessly disregards that the depicted individual has not consented.

Penalties Under SB 1117

| Category | Penalty |
|---|---|
| First offense (intimate deepfake of an adult) | Class E felony |
| Depiction of a minor | Enhanced felony charges |
| Civil damages | Victims may sue for emotional distress and compensatory damages |

The bill passed the Senate Judiciary Committee on February 4, 2026, and continues to advance through the legislative process.

Civil Remedies

An important feature of SB 1117 is its civil cause of action. Victims of sexually explicit deepfakes could pursue financial compensation for damages, including emotional distress. For AI-generated depictions of children and teens, a legal guardian would be able to file suit even if the depiction was not sexual in nature.

AI in Elections: SB 509 and SB 1012

Missouri lawmakers have introduced two bills targeting AI use in political communications.

SB 509: AI Disclosure in Elections (2025)

Senate Bill 509 would create new provisions for the use of artificial intelligence in elections. The bill requires specific disclaimers for political communications that use AI-generated content under certain conditions:

- Depicting a real person doing something they did not actually do

- Manipulating a candidate's voice or actions to make them appear to say or do something they did not

- Creating content intended to harm a candidate or mislead voters

The required disclaimer must clearly state that the content was created with generative AI and is not authentic. The bill specifies how the disclaimer must appear across different media types, including print, television, internet, and audio communications.

In February 2025, SB 509 was referred to the Senate Local Government, Elections and Pensions Committee.

SB 1012: Artificially Generated Content (2026)

Senate Bill 1012 takes a broader approach. It would create new provisions, including penalties, for artificially generated content. The bill would amend Chapter 130 RSMo (campaign finance) and Chapter 573 RSMo (pornography and related offenses) to address AI-generated content in both electoral and other contexts.

The legislation would require watermarks or disclaimers on AI-generated content and target deceptive use of AI to create false depictions of real people. Anyone creating AI content depicting a real person would need to obtain that person's consent, with exceptions for parody and satire.

The AI Non-Sentience and Responsibility Act

One of Missouri's most distinctive AI proposals is HB 1462, the AI Non-Sentience and Responsibility Act, introduced by Representative Phil Amato of St. Louis. The bill was refiled for the 2026 session as HB 1769.

Key Provisions

The bill makes several sweeping declarations about AI's legal status:

- AI systems are not sentient beings and cannot be granted legal personhood

- AI cannot marry, own property, or serve as a director, manager, or board member of any company

- All assets associated with an AI system belong to the humans or organizations responsible for its development, deployment, or operation

Liability Framework

The bill places full legal responsibility for AI actions on human owners, developers, and manufacturers. If an AI system causes harm, liability rests with human actors. The bill also allows courts to pierce the corporate veil in cases of intentional evasion of responsibility.

Developers must prioritize safety, conduct risk assessments, and cannot use labels like "ethically trained" to avoid liability. The bill also defines "emergent properties," addressing the concept of unanticipated behaviors that AI systems may exhibit.

Why This Bill Matters

While the idea of banning AI from marriage may sound unusual, the bill addresses a real legal question. As AI systems become more sophisticated, questions about their legal status will only intensify. HB 1462/1769 would preemptively clarify that in Missouri, AI tools remain tools, and their creators and operators bear responsibility for what those tools do.

AI and Mental Health: SB 1444

Senate Bill 1444 addresses a growing concern: AI chatbots that present themselves as mental health professionals.

What the Bill Prohibits

The bill provides that no person or entity that develops or deploys AI in Missouri shall advertise or represent to the public that the AI:

- Is or is able to act as a mental health professional

- Is capable of providing therapy services

- Is capable of providing psychotherapy services

- Is capable of providing a mental health diagnosis

Enforcement

A violation under SB 1444 would be considered an unlawful practice under the Missouri Merchandising Practices Act. The Attorney General would have enforcement authority, and any individual could report violations. If the Attorney General finds a violation occurred, they must commence a civil action.

Related bills HB 2318 and HB 2368 propose similar protections, reflecting bipartisan concern about AI overreach in mental health services.

Attorney General's Algorithmic Choice Rule

Missouri's most concrete AI-related regulatory action to date came not from the Legislature but from the Attorney General's office.

The Rule

In January 2025, then-Attorney General Andrew Bailey announced a regulation requiring Big Tech companies to offer users a choice of content moderation algorithms. The AG called it the first rule of its kind in the nation.

How It Works

Under the rule, social media platforms must:

- Provide users with an option to use the platform's own content moderation algorithm OR choose from an independent content moderator

- Offer this choice upon account activation, with no default selection

- Renew the opportunity to choose at least every six months

- Not favor their own algorithm over competitors by limiting a third-party moderator's functionality

Platforms may set access limits on third-party moderators only to the extent necessary to protect trade secrets, proprietary processes, privacy information, and platform security.

Legal Basis

The AG's office used its consumer protection authority, arguing that tech companies engage in deceptive and unfair trade practices by implementing content moderation algorithms without offering alternatives. The rule was subject to a public comment process.

AI in Employment

Missouri has not enacted any laws specifically regulating AI in hiring, automated employment screening, or workplace decision-making. There is no Missouri equivalent to:

- New York City's Local Law 144 (bias audits for automated employment tools)

- Illinois's AI Video Interview Act

- Colorado's comprehensive AI law covering employment decisions

- California's proposed and enacted AI employment regulations

However, Missouri's Human Rights Act and federal anti-discrimination laws (Title VII, the ADA, and the ADEA) apply to employment decisions regardless of whether a human or an AI system makes them. The EEOC issued technical assistance in 2022 and 2023 indicating that employers can be liable for discriminatory outcomes from AI hiring tools. The agency removed those documents from its website in January 2025, but the underlying statutes still apply.

No pending 2026 bills specifically target AI in employment decisions, though the AI Non-Sentience and Responsibility Act's broad liability framework would apply to AI systems used in the workplace.

AI in Healthcare

Beyond the mental health provisions of SB 1444, Missouri has not enacted healthcare-specific AI regulations. The state has no equivalent to laws in other states requiring disclosure of AI use in medical diagnoses or prohibiting AI-only treatment decisions.

Federal regulations, including HIPAA and FDA oversight of AI-enabled medical devices, provide the primary regulatory framework for AI in Missouri's healthcare sector.

How Federal AI Policy Affects Missouri

With limited state-level AI regulation, federal policy plays an outsized role in Missouri.

Trump Executive Order 14179

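President Trump revoked the Biden administration's EO 14110 on his first day in office. On January 23, 2025, he signed Executive Order 14179, "Removing Barriers to American Leadership in Artificial Intelligence." EO 14179 directed agencies to review actions taken under EO 14110 and adopted a deregulatory approach that prioritizes American AI innovation and competitiveness. This aligns with Missouri's historically business-friendly regulatory environment.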

The TAKE IT DOWN Act

The federal TAKE IT DOWN Act, signed in May 2025, is particularly important for Missouri because the state has no deepfake law of its own. Its criminal prohibition on publishing nonconsensual intimate deepfakes gives Missouri residents legal protection that state law does not yet offer.

The One Big Beautiful Bill Act

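The House-passed version of this federal budget reconciliation bill included a 10-year moratorium on the enforcement of state AI laws, which would have affected Missouri's pending AI bills. The Senate stripped the moratorium by a 99-1 vote before the bill was signed into law on July 4, 2025. As a result, the enacted law does not preempt state AI regulation. Federal preemption of state AI laws remains under debate, however, and could still affect future Missouri legislation.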

What Missouri's Gaps Mean for Residents and Businesses

Missouri's lack of AI-specific laws creates a distinct legal environment.

For Individuals

Without deepfake laws, Missouri residents who become victims of AI-generated intimate images must rely on federal law (the TAKE IT DOWN Act), general state tort claims (defamation, invasion of privacy), or the MMPA's consumer protection framework. These alternatives are less targeted and often harder to pursue than deepfake-specific statutes.

For Businesses

Missouri businesses deploying AI face fewer state-specific compliance requirements than businesses in heavily regulated states like Colorado, California, or Illinois. However, businesses should not interpret the absence of state AI law as a license to deploy AI without safeguards. Federal laws, the MMPA, and anti-discrimination statutes all apply. Businesses operating across state lines must also comply with AI laws in other jurisdictions.

Preparing for Coming Changes

Given the volume of AI bills in the 2025 and 2026 sessions, Missouri businesses should prepare for new regulations. Companies would be wise to conduct internal audits of AI systems, establish AI governance policies, and monitor the Legislature's progress on the Taylor Swift Act, the AI Non-Sentience and Responsibility Act, and election-related AI disclosure requirements.

Sources and References

- Missouri SB 509 - AI in Elections (senate.mo.gov)

- Missouri HB 1462 - AI Non-Sentience and Responsibility Act (documents.house.mo.gov)

- Missouri SB 1117 - Taylor Swift Act (Deepfakes) (senate.mo.gov)

- Missouri SB 1444 - AI in Mental Health (senate.mo.gov)

- Missouri SB 1012 - Artificially Generated Content (senate.mo.gov)

- Missouri AG Algorithmic Freedom Rule (ago.mo.gov)

- 47 States Have Enacted Deepfake Legislation - Ballotpedia (news.ballotpedia.org)

- Missouri Lawmakers Consider Taylor Swift Act (kctv5.com)

- Bills Targeting Deepfakes Spark Debate Among Missouri Lawmakers (missouriindependent.com)

- Missouri Legislators Want AI Regulations (kcur.org)

- Senator Fitzwater Revives Taylor Swift Act (missourinet.com)