North Dakota AI Laws and Regulation (2026)

Overview of North Dakota AI Laws

North Dakota took decisive action on artificial intelligence during its 2025 legislative session, enacting multiple laws addressing deepfakes, political AI disclosures, healthcare AI, and child protection. Because North Dakota holds legislative sessions only in odd-numbered years, the 2025 session represented the state's first opportunity to address the rapid growth of AI technology through legislation.

Governor Kelly Armstrong signed four AI-specific bills into law during the session, covering political ad disclosures, fraudulent deepfakes, nonconsensual synthetic intimate images, and AI-generated child sexual abuse material. Additionally, Senate Bill 2280 addressed AI use in healthcare insurance decisions.

The state also established official AI guidelines for state agencies through the North Dakota Information Technology department, creating a governance framework for how state government uses AI tools.

This article covers North Dakota's enacted AI legislation, deepfake regulations, healthcare AI rules, and state government AI governance. This information is current as of March 2026, but you should consult an attorney for advice specific to your situation.

AI in Political Communications: HB 1167

House Bill 1167 addresses the use of artificial intelligence in political advertising. The law, which passed on April 14, 2025, creates a new section in Chapter 16.1-10 of the North Dakota Century Code requiring disclosure when AI is used to create political content.

Disclosure Requirements

Under HB 1167, any political communication or political advertisement created wholly or in part by artificial intelligence tools must include a prominent disclaimer reading: "This content generated by artificial intelligence."

The law applies to:

- Television and video advertisements

- Radio and audio advertisements

- Print materials and mailers

- Digital and online advertisements

- Social media political content
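For teams producing campaign material, the disclosure rule can be treated as a simple publishing check. The sketch below is illustrative only: the helper name `with_ai_disclaimer` and the `ai_assisted` flag are hypothetical, and the code does not model how the statute defines "prominent" or where the disclaimer must appear in each medium.

```python
# Disclaimer wording as quoted in this article's summary of HB 1167.
DISCLAIMER = "This content generated by artificial intelligence."


def with_ai_disclaimer(ad_text: str, ai_assisted: bool) -> str:
    """Return ad_text with the disclaimer appended when AI was used.

    Hypothetical helper: placement and prominence rules are not modeled.
    """
    # Leave the text alone if no AI was used or the disclaimer is already present.
    if not ai_assisted or DISCLAIMER in ad_text:
        return ad_text
    return f"{ad_text.rstrip()}\n\n{DISCLAIMER}"
```

Calling the helper twice on the same text adds the disclaimer only once, which makes it safe to run at more than one stage of a publishing workflow.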

Purpose and Context

North Dakota lawmakers introduced HB 1167 in response to growing concerns about AI-generated political content that could mislead voters. The bill recognizes that as AI tools become more sophisticated, voters need transparency about whether the political content they see was created or modified by artificial intelligence.

The law places North Dakota among the 28 states that have enacted laws related to deepfakes used in political communications as of early 2026.

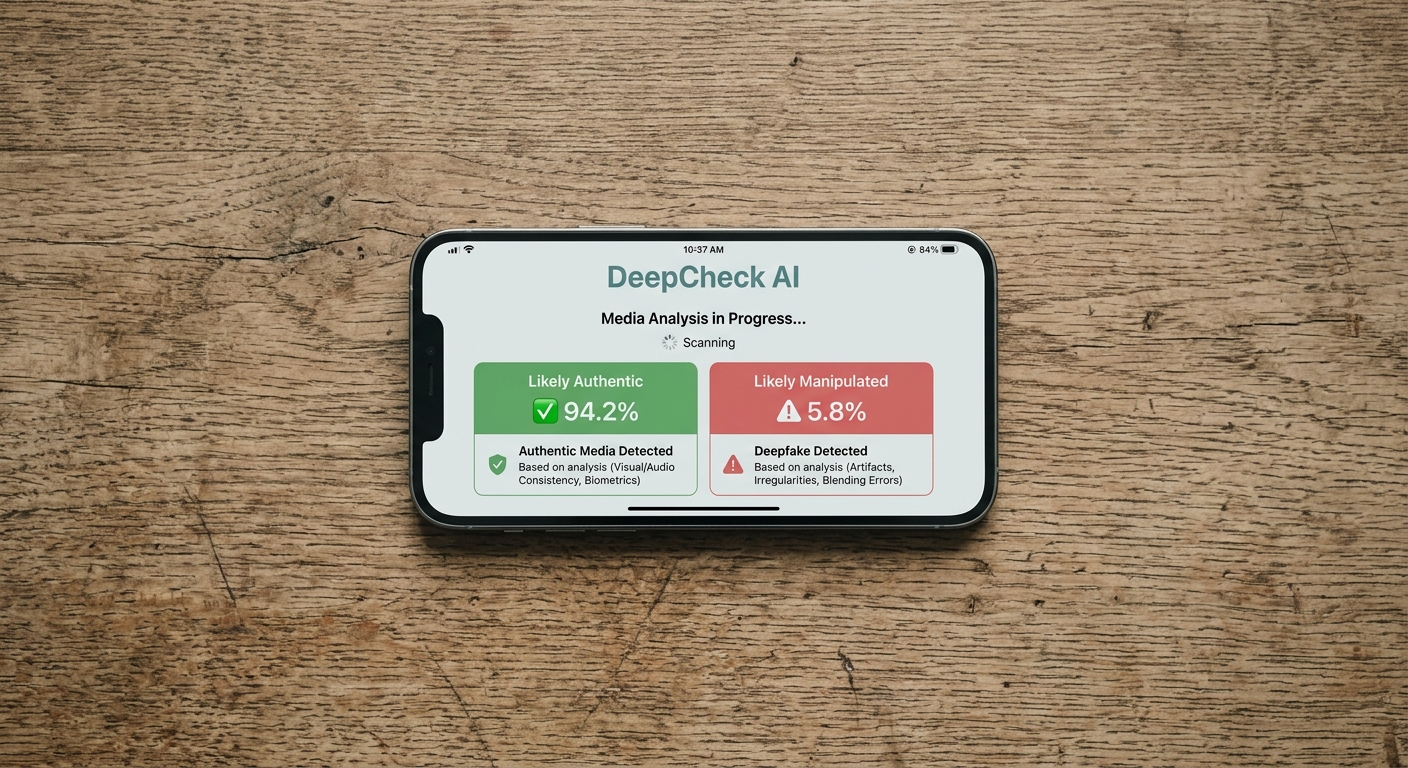

Fraudulent Deepfakes: HB 1320

House Bill 1320 creates a broad prohibition against the fraudulent use of deepfake videos and images. The law adds a new section to Chapter 12.1-31 of the North Dakota Century Code (Miscellaneous Offenses).

What the Law Prohibits

HB 1320 makes it illegal to create, possess, or release deepfake videos and images without the consent of the person featured. The law defines a "deepfake video or image" as any digitally altered or AI-generated content that depicts an individual's likeness or voice without their consent, with the intent to deceive.

Penalties

| Offense | Classification | Maximum Penalty |
|---|---|---|
| Creating a deepfake without consent | Class A misdemeanor | 360 days imprisonment, $3,000 fine |
| Possessing a deepfake without consent | Class A misdemeanor | 360 days imprisonment, $3,000 fine |
| Releasing a deepfake without consent | Class A misdemeanor | 360 days imprisonment, $3,000 fine |

A Class A misdemeanor is the most serious misdemeanor classification in North Dakota, carrying a maximum sentence of 360 days of imprisonment and a $3,000 fine.

Scope and Intent

Representative Josh Christy, R-Fargo, the prime sponsor of the bill, explained that deepfakes pose a threat to North Dakotans because it has become increasingly difficult to determine what is real and what is fake. The bill's intent is to prevent anyone from using another person's likeness without permission, whether for fraud, harassment, or deception.

Nonconsensual Synthetic Intimate Images: HB 1351

House Bill 1351 specifically targets the creation and distribution of sexually explicit deepfakes without consent. Governor Armstrong signed the bill into law, and it took effect on August 1, 2025.

Criminal Penalties

HB 1351 makes it a misdemeanor to create, possess, or distribute sexually expressive images, including real, altered, or computer-generated deepfakes, that show nude or partially denuded figures without the depicted person's consent.

Civil Remedies

Beyond criminal penalties, HB 1351 provides significant civil remedies for victims:

| Civil Remedy | Amount | Details |
|---|---|---|
| Statutory damages | Up to $10,000 | Available per violation |
| Disgorgement | Variable | Defendant must surrender any money gained through distribution |
| Attorney fees | Variable | Recoverable by prevailing plaintiff |

The civil remedy provision allows victims of nonconsensual synthetic intimate images to file lawsuits to recover up to $10,000 in statutory damages. Plaintiffs can also recover any money the defendant gained through distributing the images.
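As a rough illustration of how these remedies add up, the sketch below computes an upper bound on recovery from the figures in the table above. The function name and inputs are hypothetical, attorney fees are left out because they vary, and an actual award depends on the court.

```python
# Cap taken from this article's summary of HB 1351 (assumption, not statute text).
STATUTORY_CAP_PER_VIOLATION = 10_000


def max_civil_recovery(violations: int, defendant_gains: float = 0.0) -> float:
    """Upper bound on statutory damages plus disgorgement, excluding attorney fees."""
    if violations < 0 or defendant_gains < 0:
        raise ValueError("inputs must be non-negative")
    return violations * STATUTORY_CAP_PER_VIOLATION + defendant_gains
```

For example, three violations by a defendant who earned $2,500 from distribution would give a ceiling of $32,500 before attorney fees.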

Relationship to HB 1320

While HB 1320 broadly addresses fraudulent deepfakes, HB 1351 specifically targets sexually explicit synthetic content. The two laws work together to provide both criminal and civil remedies for different types of deepfake harms. A person who creates a sexually explicit deepfake could potentially face charges under both statutes.

AI-Generated Child Sexual Abuse Material: HB 1386

House Bill 1386 addresses one of the most serious AI-related crimes: the creation and possession of AI-generated child sexual abuse material (CSAM). The law was introduced by Representative Josh Christy, R-Fargo.

Why This Law Was Needed

The number of reports of child sexual abuse materials in North Dakota has tripled since 2020, and state experts link the spike in part to realistic AI-generated content. Before HB 1386, investigators encountered AI-generated child abuse cases that could not be effectively prosecuted under existing law because the content did not depict real children.

Criminal Penalties

HB 1386 establishes a tiered penalty structure based on the nature of the content and the age of the depicted minor:

| Offense | Classification | Maximum Sentence | Maximum Fine |
|---|---|---|---|
| Possession of AI-generated CSAM | Class C felony | 5 years imprisonment | $10,000 |
| Content involving prepubescent minor or child age 12 or younger | Class B felony | 10 years imprisonment | $20,000 |
| Content depicting violence or bestiality | Class B felony | 10 years imprisonment | $20,000 |

Key Definitions

The law addresses the challenge of determining the "age" of fictitious AI-generated children. Because the content may involve entirely fabricated individuals, the law specifies that the ages of depicted children can be determined by secondary sex characteristics shown in the content. This approach allows prosecutors to pursue cases even when no real child was depicted.

Interaction with Federal Law

HB 1386 complements federal prohibitions on child sexual abuse material. While federal law under 18 U.S.C. Section 2256 already addresses computer-generated child pornography, state-level prosecution provides an additional tool for law enforcement, particularly for cases that may not meet federal prosecution thresholds.

Healthcare AI Regulation: SB 2280

Senate Bill 2280 is North Dakota's most significant healthcare AI law. Governor Kelly Armstrong signed the bill on April 23, 2025, and it took effect on January 1, 2026.

Core Requirement

The law mandates that licensed physicians, not AI systems, must make prior authorization decisions for medical treatments. Insurance companies cannot use artificial intelligence algorithms as the sole basis for denying, delaying, or modifying healthcare coverage.

Decision Timelines

SB 2280 establishes strict deadlines for insurance companies to respond to prior authorization requests:

| Request Type | Decision Deadline | Default if Missed |
|---|---|---|
| Non-urgent services | 7 calendar days | Automatically authorized |
| Urgent services | 72 hours | Automatically authorized |

If an insurer fails to respond within the required timeframe, the request is considered authorized by default. This "default approval" provision prevents insurers from using delays to deny coverage.
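The deadline and default-approval logic can be sketched in a few lines. This is a minimal illustration based only on the table above: the function names and the `request_type` keys are hypothetical, and the code assumes the clock starts at the moment the request is received, which may not match how the statute counts time.

```python
from datetime import datetime, timedelta
from typing import Optional

# Response windows as described in this article's summary of SB 2280.
RESPONSE_WINDOWS = {
    "non_urgent": timedelta(days=7),  # 7 calendar days
    "urgent": timedelta(hours=72),    # 72 hours
}


def decision_deadline(received: datetime, request_type: str) -> datetime:
    """Latest time the insurer can respond (assumes the clock starts at receipt)."""
    return received + RESPONSE_WINDOWS[request_type]


def is_authorized_by_default(
    received: datetime,
    request_type: str,
    decided: Optional[datetime],
    now: datetime,
) -> bool:
    """True when the deadline has passed without a timely decision."""
    deadline = decision_deadline(received, request_type)
    if decided is not None and decided <= deadline:
        return False  # the insurer responded in time
    return now > deadline
```

An urgent request received at 9:00 a.m. on January 5 would have a deadline of 9:00 a.m. on January 8 under these assumptions. With no decision by then, the request would be treated as authorized.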

Physician Review Requirements

The law requires that:

- Adverse determinations must be reviewed by licensed physicians or dentists

- Reviewing physicians must be qualified in the relevant medical specialty

- Prior authorization review organizations must make their requirements transparent

- Clear website information about prior authorization criteria must be publicly available

Legislative Support

SB 2280 passed the North Dakota legislature with near-unanimous bipartisan support. The bill passed unanimously in the House and with only minor opposition in the Senate, reflecting broad agreement that AI should not replace physician judgment in healthcare coverage decisions.

Impact on Healthcare

Healthcare organizations in North Dakota praised the law as a significant reform. Prior authorization has long been a source of friction between healthcare providers and insurance companies, and the increasing use of AI to automate these decisions has amplified concerns about patients receiving denials without meaningful human review.

State Government AI Guidelines

The North Dakota Information Technology department (NDIT) has published official AI guidelines for state government use. These guidelines provide a governance framework that applies to all executive branch state agencies, including the University Systems Office.

Scope and Purpose

The NDIT AI guidelines outline best practices for:

- Secure use of AI technologies

- Privacy protection when using AI systems

- Ethical considerations in AI deployment

- Responsible innovation in government AI applications

State agencies outside NDIT's direct scope are encouraged to adopt the guidelines or use them as a framework for developing their own AI policies.

Key Definitions

The guidelines define three core AI concepts for state employees:

- Artificial Intelligence (AI): A field in computer science that focuses on independent decisions based on supervised and unsupervised learning

- Machine Learning (ML): A subfield of AI focused on algorithms and statistical models for independent decisions, while still requiring human guidance

- Large Language Models (LLMs): AI systems trained on large text datasets to understand existing content and generate original content

Privacy Considerations

The guidelines highlight important privacy risks associated with AI use in government. Data entered into public AI services is not secure, and public AI services may incorporate input data into their learning models. This means sensitive government data could potentially be exposed as output to other users if entered into public AI tools.

State employees are advised to avoid entering sensitive or confidential information into public AI services and to use approved, secure AI tools for government work.
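One way an agency might put this advice into practice is to screen text before it is pasted into a public AI service. The sketch below is a hypothetical illustration, not part of the NDIT guidelines: the pattern names and regular expressions are assumptions chosen for the example, and a real deployment would need a much broader and more carefully tested set of rules.

```python
import re

# Hypothetical patterns for illustration only; not taken from the NDIT guidelines.
SENSITIVE_PATTERNS = {
    "ssn_like": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "marked_confidential": re.compile(r"\bconfidential\b", re.IGNORECASE),
}


def find_sensitive(text: str) -> list[str]:
    """Return the names of any patterns that match the text."""
    return [name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)]
```

An empty result does not prove the text is safe to share. It only means none of the listed patterns matched.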

AI in Employment

North Dakota has not enacted specific legislation governing the use of AI in employment and hiring decisions. Employers in the state using AI-powered hiring tools, resume screening systems, or automated evaluation processes must comply with existing federal anti-discrimination laws.

Federal Protections

Without state-specific AI employment legislation, North Dakota employers are governed by:

- Title VII of the Civil Rights Act prohibiting employment discrimination

- The Americans with Disabilities Act protecting candidates with disabilities from AI screening bias

- The Age Discrimination in Employment Act protecting workers age 40 and older

- Equal Employment Opportunity Commission guidance on AI in employment decisions

Future Considerations

As AI hiring tools become more prevalent, North Dakota may consider employment-specific AI legislation in future legislative sessions. The state's next regular legislative session is scheduled for 2027, when lawmakers will have the opportunity to address any emerging concerns about AI in the workplace.

Federal AI Policy and North Dakota

Executive Order 14365

President Trump's Executive Order 14365, signed December 11, 2025, establishes federal AI policy that intersects with state regulatory efforts. The order creates mechanisms for reviewing state AI laws and conditions certain federal funding on states' regulatory approaches.

Impact on North Dakota Laws

North Dakota's enacted AI laws interact with the federal framework in several ways:

Deepfake laws (HB 1320, HB 1351, HB 1386): These laws address criminal conduct and child safety, areas where states have traditional authority. The child safety provisions of HB 1386 in particular fall within explicit federal carve-outs.

Political AI disclosure (HB 1167): Election regulation is primarily a state function, though federal preemption concerns could arise if the disclosure requirements are deemed to burden AI development.

Healthcare AI (SB 2280): Insurance regulation has traditionally been a state domain under the McCarran-Ferguson Act, providing strong legal footing for North Dakota's healthcare AI restrictions.

State government AI guidelines (NDIT): Guidelines governing state agency AI use fall squarely within states' authority over their own government operations.

Comparison: North Dakota's AI Approach

North Dakota's 2025 legislative session was among the most productive of any state for AI legislation that year. The state's approach is notable for several reasons:

Comprehensive coverage: By enacting four separate bills addressing different uses of AI-generated and synthetic content, North Dakota created a layered regulatory framework rather than relying on a single omnibus bill.

Healthcare focus: SB 2280 places North Dakota among the early states to specifically restrict AI in healthcare insurance decisions, a trend that is gaining momentum nationally.

Bipartisan support: All of North Dakota's AI bills received strong bipartisan support, with SB 2280 passing unanimously in the House.

Biennial session constraint: Because North Dakota's legislature holds regular sessions only every two years, the 2025 session was the state's only opportunity to legislate on AI until 2027. This constraint may have contributed to the volume of AI legislation produced.

Sources and References

- HB 1167 - AI Disclosure Statements in Political Communications (ndlegis.gov)

- HB 1320 - Prohibiting Deepfake Videos and Images (ndlegis.gov)

- HB 1351 - Nonconsensual Synthetic Intimate Images (ndlegis.gov)

- HB 1386 - AI-Generated Child Sexual Abuse Material (ndlegis.gov)

- SB 2280 - Prior Authorization Reform and AI in Healthcare (ndlegis.gov)

- NDIT Artificial Intelligence Guidelines for State Agencies (ndit.nd.gov)

- Governor Armstrong Signs Bill to Check AI Health Care Decisions (inforum.com)

- North Dakota House Considers Bills on AI in Political Ads, Deepfakes (northdakotamonitor.com)

- Child Sex Abuse Material Reports Triple in North Dakota (grandforksherald.com)

- AI Deepfake Policy in North Dakota - Ballotpedia (ballotpedia.org)

- NCSL Artificial Intelligence 2025 Legislation Tracker (ncsl.org)

- Essentia Health Applauds North Dakota Prior Authorization Reform (essentiahealth.org)