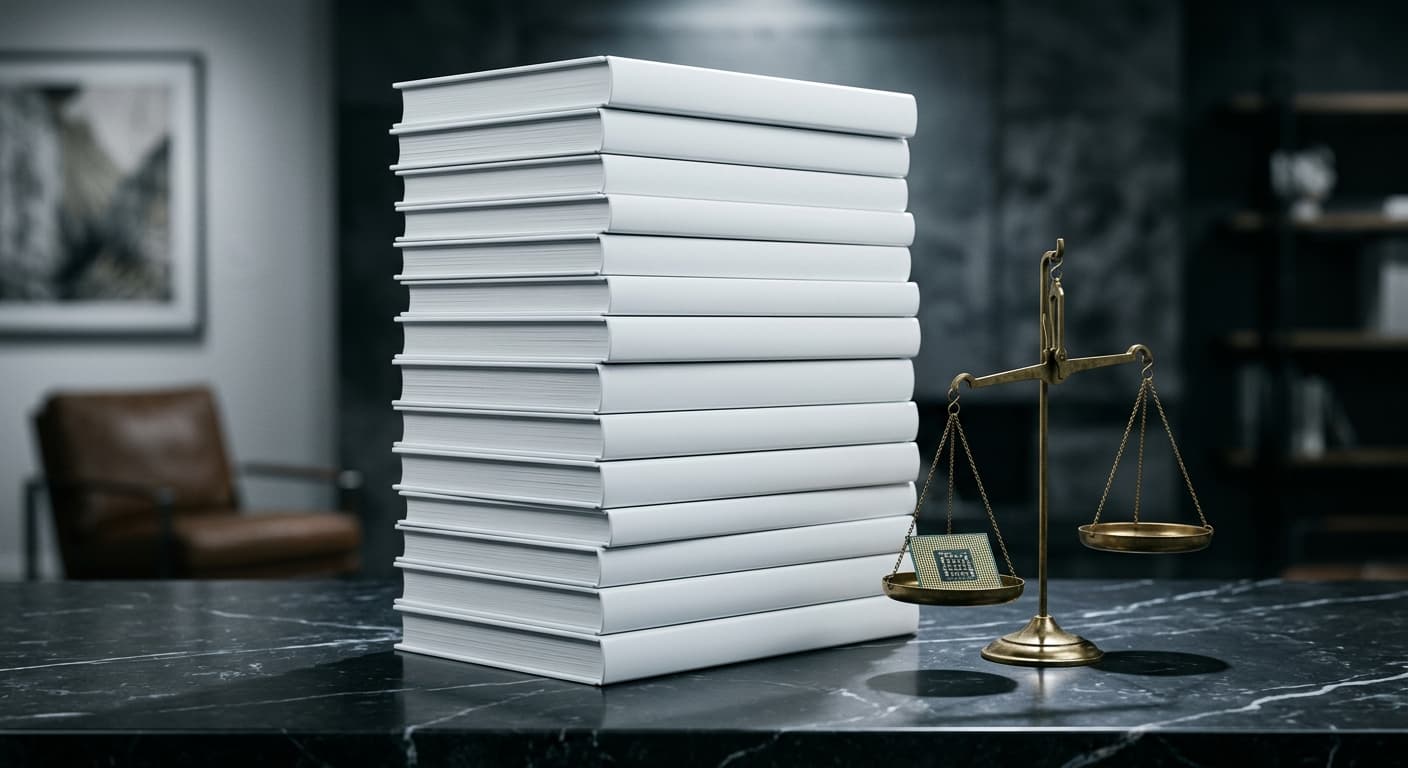

Don't Steal This Book: 10,000 Authors Publish Empty Novel to Protest AI Copyright Theft

Nearly 10,000 authors, including Nobel laureate Kazuo Ishiguro, published a book with 88 pages of names followed by nothing but blank pages. Distributed at the London Book Fair in March 2026, "Don't Steal This Book" is a protest against AI companies that scraped copyrighted creative works without permission or payment to train their models.

The stunt landed during a critical window. The UK government was weighing whether to upend copyright law in favor of AI companies, a $3.1 billion music piracy lawsuit had been filed against Anthropic, and Anthropic itself had accused three Chinese AI labs of stealing its own model outputs. The message from the creative community was blunt: the AI industry is built on stolen work.

The Empty Book

"Don't Steal This Book" is a physical object designed to make an argument. The first 88 pages list the names of the contributing authors. After that, every page is blank.

The symbolism is intentional. If AI companies continue to train large language models on copyrighted novels, short stories, and other creative writing without licensing or compensation, the blank pages represent the future of authorship: writers who can no longer sustain careers because machines trained on their work have replaced the market for it.

The book was organized by Ed Newton-Rex, a composer and technologist who left his role at an AI company after growing disillusioned with how the industry treated creative intellectual property. Newton-Rex founded Fairly Trained, an organization that certifies AI models built on properly licensed data.

"The AI industry is built on stolen work, taken without permission or payment," Newton-Rex told The Guardian. He described the book as a direct message to the UK government, which was then considering changes to copyright law that could benefit AI developers at the expense of creators.

Among the nearly 10,000 contributing authors were Kazuo Ishiguro (Nobel Prize in Literature, 2017), Richard Osman, Alan Moore, Marian Keyes, Philippa Gregory, Malorie Blackman, and Mick Herron. Approximately 1,000 physical copies were distributed across the London Book Fair, held March 10-12, 2026.

The UK Copyright Battle

The protest's timing was strategic. The UK government was required to submit both an economic impact assessment and a comprehensive progress report on copyright and AI to Parliament by March 18, 2026, under Section 137 of the Data (Use and Access) Act.

What the Government Proposed

In December 2024, when it opened its public consultation, the UK government identified a broad data mining exception with an opt-out mechanism as its preferred approach. Under this framework, AI companies could train models on any lawfully accessed copyrighted material, and rights holders who objected would need to actively opt out.

How Creators Responded

The proposal was overwhelmingly rejected by the creative industries. During the public consultation (December 2024 through February 2025), 88% of over 11,500 respondents supported requiring licenses in all cases. Only 3% backed the government's preferred opt-out model.

A taskforce of 80 people manually analyzed all responses without using AI tools. Over 3,000 of the responses were based on template letters organized by creative industry groups, reflecting coordinated opposition.

The Government's Reversal

On March 18, 2026, the UK government announced it had reversed course. It withdrew support for the opt-out framework and stated it now has "no preferred option" for reform. The government committed to continuing evaluation of policy options, monitoring international developments, and exploring licensing mechanisms for smaller organizations.

Three core principles were outlined for any future framework: rights holders should control how their work is used and receive compensation, developers need lawful access to content for training, and transparency requirements must give visibility into what data models are trained on.

The $3.1 Billion Anthropic Music Lawsuit

While authors protested in London, the courtroom fight over AI and copyright was escalating in the United States.

In January 2026, a coalition of music publishers led by Universal Music Group and Concord Music Group filed suit against Anthropic seeking more than $3 billion in damages. The complaint alleges Anthropic illegally downloaded over 20,000 copyrighted songs, including sheet music, lyrics, and musical compositions, to train its Claude AI model.

According to the filing, Anthropic built a permanent internal library of copyrighted texts sourced from so-called pirate repositories rather than acquiring licensed copies. The case evolved from an earlier, smaller lawsuit covering approximately 500 works. Through the discovery process, the publishers claim they found evidence of a far larger operation.

If the damages are awarded in full, the case would rank as one of the largest non-class-action copyright cases in U.S. history.

The Bartz Settlement and Fair Use Precedent

The music lawsuit follows the landmark Bartz v. Anthropic settlement, which resolved a class action brought by authors. In that case, Judge William Alsup drew a critical distinction that has shaped the legal landscape: training AI models on copyrighted content may qualify as fair use, but acquiring that content through piracy does not.

The case settled for $1.5 billion, with affected writers receiving approximately $3,000 per work for roughly 500,000 copyrighted works. Judge Alsup's ruling established an important boundary: AI companies can argue fair use for the training process itself, but they must obtain their training data through legitimate channels.
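The settlement figures can be cross-checked with simple arithmetic. The Python sketch below multiplies the approximate per-work payment by the approximate number of works. Both inputs are the rounded figures quoted in this article, not exact values from the settlement documents, and the names are illustrative.

```python
# Rounded figures quoted in the article (approximations, not court-record values).
PAYMENT_PER_WORK_USD = 3_000
WORKS_COVERED = 500_000
REPORTED_SETTLEMENT_USD = 1_500_000_000


def implied_total(payment_per_work: int, works: int) -> int:
    """Total payout implied by a flat per-work payment."""
    return payment_per_work * works


total = implied_total(PAYMENT_PER_WORK_USD, WORKS_COVERED)
print(f"Implied total: ${total:,}")
print(f"Matches reported settlement: {total == REPORTED_SETTLEMENT_USD}")
```

With these rounded inputs the implied total equals the reported $1.5 billion, so the three numbers are mutually consistent.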

This distinction matters because it shifts the legal battleground. The question is no longer just "can AI companies use copyrighted works to train models?" but "how did they acquire those works in the first place?"

When AI Companies Get Stolen From

In a twist that underscored the complexity of intellectual property in the AI era, Anthropic itself accused three Chinese AI laboratories of stealing its work.

In February 2026, Anthropic publicly alleged that DeepSeek, Moonshot AI, and MiniMax had orchestrated industrial-scale "distillation" campaigns targeting Claude. The three labs allegedly created approximately 24,000 fraudulent accounts and generated over 16 million exchanges designed to systematically extract Claude's reasoning capabilities.

MiniMax was the most prolific, generating over 13 million exchanges focused on coding and tool use. Moonshot AI accounted for more than 3.4 million exchanges. DeepSeek's operation was smaller (over 150,000 exchanges) but strategically targeted Claude's reasoning and reinforcement learning capabilities.
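The per-lab figures can be tallied against the overall total of "over 16 million" exchanges. The Python sketch below treats each quoted number as a lower bound, since the article gives them as "over" or "more than". The dictionary and variable names are illustrative, and the shares are only as precise as those rounded inputs.

```python
# Lower-bound exchange counts per lab, as quoted in the article.
min_exchanges = {
    "MiniMax": 13_000_000,
    "Moonshot AI": 3_400_000,
    "DeepSeek": 150_000,
}

# Sum of lower bounds is itself a lower bound on the combined total.
total_min = sum(min_exchanges.values())

for lab, count in min_exchanges.items():
    share = count / total_min
    print(f"{lab}: at least {count:,} exchanges ({share:.1%} of the lower-bound total)")

print(f"Lower-bound total: {total_min:,}")
```

The lower bounds sum to 16,550,000, which is consistent with the combined figure of more than 16 million exchanges.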

The irony was not lost on commentators: an AI company accused of building its models on pirated creative works was itself alleging that foreign competitors had pirated its AI outputs. As of March 2026, the U.S. Department of Justice had indicated it was investigating potential trade secret and computer fraud violations, though enforcement against companies operating primarily outside U.S. jurisdiction presents significant practical obstacles.

The Legal Landscape for AI and Copyright

The fight over AI training and copyright is playing out across multiple legal fronts simultaneously.

U.S. Fair Use Uncertainty

Courts have split on whether AI training constitutes fair use under 17 U.S.C. § 107. The Bartz ruling allowed fair use for training but drew the line at pirated source material. In Kadrey v. Meta Platforms, the court noted that AI models can "flood the market with similar texts and stifle competition," identifying potential market harm that could undermine fair use claims.

On March 2, 2026, the U.S. Supreme Court denied certiorari in Thaler v. Perlmutter, leaving in place the lower-court ruling that AI-generated content cannot be copyrighted without human authorship. The upshot is that while AI companies may (or may not) have the right to use copyrighted works as training data, the outputs those models generate without human authorship do not themselves receive copyright protection.

International Approaches

Countries are taking divergent paths:

| Jurisdiction | Approach |
|---|---|
| United States | Case-by-case fair use analysis; no comprehensive AI copyright legislation |
| United Kingdom | Reversed preferred opt-out model; now has "no preferred option" |
| European Union | Text and data mining exception under DSM Directive, with opt-out for commercial use |
| Japan | Broad exception for AI training under 2018 copyright amendments |

The lack of international consensus means AI companies operate under different rules depending on where they and their training data are located. This creates both legal uncertainty and opportunities for jurisdiction shopping.

What This Means for Digital Rights

The "Don't Steal This Book" protest and the lawsuits surrounding it sit at the intersection of copyright, data privacy, and digital rights. Several principles are emerging from the legal battles.

Consent matters. Whether it is authors objecting to their books being scraped, musicians objecting to their songs being pirated, or users objecting to their recordings being reviewed by contractors, the common thread is that people and organizations want control over how their creative output is used.

Piracy is still piracy. The Bartz case drew a bright line: however courts ultimately resolve the fair use question for AI training, obtaining training data through pirated or unauthorized channels is not protected. This principle applies equally to the Anthropic music case and to the distillation allegations against DeepSeek, Moonshot AI, and MiniMax.

Transparency is the minimum. Both the UK government's revised framework and the EU's DSM Directive emphasize transparency requirements. AI companies may eventually be required to disclose what copyrighted works they used for training, giving rights holders the information they need to enforce their rights.

The blank pages of "Don't Steal This Book" ask a question that legislatures, courts, and AI companies have not yet fully answered: who gets to profit from creative work, and who decides?

Consult an attorney for advice specific to your situation, particularly if your copyrighted work may have been used in AI training without authorization.

Sources and References

- UK Copyright and AI Progress Report - GOV.UK (gov.uk)
- Generative AI and Copyright Law - Congressional Research Service (congress.gov)
- 17 U.S.C. 107 - Fair Use (copyright.gov)
- Music publishers sue Anthropic for $3B over piracy of 20,000 works - TechCrunch (techcrunch.com)
- Anthropic claims 3 Chinese companies ripped it off - Fortune (fortune.com)
- 10,000 Authors Protest AI With Empty Book - Deadline (deadline.com)
- Authors protest AI at London Book Fair - Euronews (euronews.com)
- UMG Sues Anthropic for $3 Billion - Billboard (billboard.com)
- AI Copyright Cases Update 2026 - Norton Rose Fulbright (nortonrosefulbright.com)
- UK Government Reverses AI Copyright Approach - Lewis Silkin (lewissilkin.com)